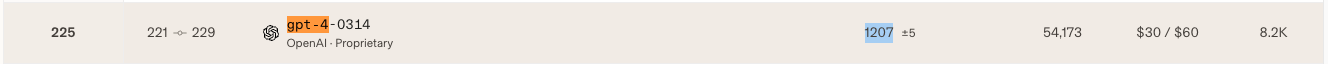

GPT-4-0314

For the locally run model, we mean the language model alone, not augmented with search, RAG, or function calling. It needs a minimum throughput of 4 tokens/second.
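A minimal sketch of how the 4 tokens/second threshold could be checked. It assumes a hypothetical `generate` callable that yields one token at a time from whatever on-device runtime is being tested. The criteria don't say whether prompt processing counts, so this sketch simply times the whole run end to end.

```python
import time
from typing import Callable, Iterable

MIN_TOKENS_PER_SECOND = 4.0  # threshold from the market criteria


def measure_throughput(generate: Callable[[str], Iterable[str]], prompt: str) -> float:
    """Return tokens per second for one generation run.

    `generate` is a hypothetical streaming callable (assumption): it yields
    one decoded token at a time. Timing is end to end, so it includes
    prompt processing as well as decoding.
    """
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate(prompt))
    elapsed = time.perf_counter() - start
    if n_tokens == 0:
        return 0.0
    return n_tokens / max(elapsed, 1e-9)  # guard against a zero-length timer reading


def meets_criterion(tokens_per_second: float) -> bool:
    """True if the measured throughput satisfies the market's minimum."""
    return tokens_per_second >= MIN_TOKENS_PER_SECOND


if __name__ == "__main__":
    # Stand-in generator (hypothetical): emits 10 tokens, ~0.05 s each.
    def fake_generate(prompt: str):
        for tok in prompt.split()[:10]:
            time.sleep(0.05)
            yield tok

    tps = measure_throughput(fake_generate, "one two three four five six seven eight nine ten")
    print(f"{tps:.1f} tokens/s, meets criterion: {meets_criterion(tps)}")
```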

Not sure what benchmarks people will use in 2026, but let's say LMSYS Arena for the moment. This may change depending on the trend.

Current SOTA:

I am not sure Phi-3 (3.8B) can fit on a phone. If not, the current best models are MiniCPM and Gemma 2B.
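Whether a model "fits on a phone" mostly comes down to weight memory versus phone RAM. As a rough back-of-the-envelope sketch, weight memory is parameter count × bits per weight ÷ 8. This ignores the KV cache, activations, and runtime overhead, so real usage will be higher. The bit widths below are common quantization levels chosen for illustration.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (10**9 bytes).

    Rough estimate only: excludes KV cache, activations, and runtime overhead.
    """
    return n_params * bits_per_weight / 8 / 1e9


for bits in (16, 8, 4):
    print(f"Phi-3 (3.8B) at {bits}-bit: ~{weight_memory_gb(3.8e9, bits):.1f} GB")
# Phi-3 (3.8B) at 16-bit: ~7.6 GB
# Phi-3 (3.8B) at 8-bit: ~3.8 GB
# Phi-3 (3.8B) at 4-bit: ~1.9 GB
```

By the same arithmetic, a phone with 32GB of RAM could hold the 4-bit weights of a model of up to roughly 64B parameters, before overhead.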

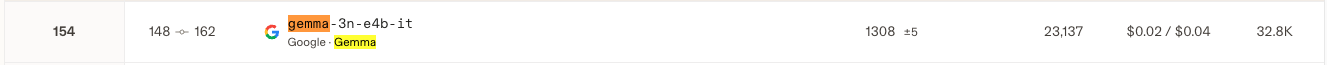

Update 2026-03-15 (PST) (AI summary of creator comment): The creator is resolving YES based on Gemma 3n being a phone-runnable model with an LMArena rating of 1308, which beats GPT-4-0314's rating of 1207.
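To give a sense of what a 1308 vs 1207 gap means, here is a sketch of the expected head-to-head win rate. It assumes the standard Elo logistic convention (base 10, 400-point scale) applies to the leaderboard scores, which is an assumption here. It ignores ties and assumes both ratings sit on the same current scale. That last point is exactly what is questioned below about rating drift.

```python
def expected_win_rate(rating_a: float, rating_b: float) -> float:
    """Expected score of A against B under the standard Elo logistic (400-point scale).

    Assumption: leaderboard scores follow this convention; ties are ignored.
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


# Ratings quoted in the resolution note: Gemma 3n (1308) vs GPT-4-0314 (1207).
p = expected_win_rate(1308, 1207)
print(f"{p:.2f}")  # 0.64
```

So under this assumption, a 101-point lead means Gemma 3n would be preferred in roughly 64% of head-to-head votes, not an overwhelming margin.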

@Sss19971997 Hmm, interesting... My intuition is that there's rating inflation in all Elo systems as more models are released! You can't just compare an Elo rating today with one from two years ago... but LMArena implies that the old rating is still current? Even though it's likely that no one has rated GPT-4 in years.

Gemma 2 9B it (instruction-tuned) is already higher on LMSYS Arena, though only by 2 points.

Update: oops, sorry, I was thinking the criterion is <10B; I confused this with a different question.

I'm guessing this means any consumer cellphone? E.g., if a model that fits in 32GB of RAM beats GPT-4 and there are only 1-2 phones with that much RAM in 2026 (the current record is 24GB), this resolves YES.

@Magnus_ Great question...

How should we specify this? I am thinking it can do RAG over everything stored on the phone, but with no internet connection.

Currently, a phone can hold up to 512GB. That is a lot of information, but not the whole internet.

This criterion captures the "local" aspect.

What do you think?

@Magnus_ I thought about it again. For a fair comparison, the local mobile LM should be held to the same standard as GPT-4, which is not using any RAG, search, or tools. I have updated the criterion.