Resolves according to my subjective judgement. I will welcome stakeholder input when resolving the question but reserve the right to resolve contrary to the opinion of market participants if necessary. I reserve the right to bet on this market but tentatively expect to stop betting in the last two years (2026-2027).

"Popular" being a loosely defined combination of public attention / resources, total adherents, and references in social discourse.

Resolves as N/A if there doesn't seem to be a clear answer at close.

In retrospect, with no offense to the OP, joining markets with subjective resolutions is dumb. There's no reasonable way to predict the OP's biases in 2027, beyond basic honesty in a case where EA has dissolved entirely or sponsored a genocide or something. I wish I hadn't gotten into this one.

Becoming more optimistic: EA has recently had scandal after scandal after scandal, yet we seem to be weathering the storm okay, and group leaders at elite universities say recruiting of new members is still strong. Add to that the potential to make inroads in countries outside the US/UK, and if AI becomes a bigger deal, some EA ideas may come to seem more reasonable to a wider population.

@DanielEth I think the greater population understands instinctively that "1 life = 1 life" utilitarianism as advocated by hardcore EA members just does not work. No one wants to be donating to an ineffective charity, but local helping and advocacy is not ineffective - even if it's less lives per $, it has compounding effects on that community in the future, and inspires people to do good.

It seems like EA has moved from this balanced perspective to longtermism, where they just make up probabilities and use them to justify funding organizations that aren't even effective in the first place. This, along with the FTX scandal, will keep the public from associating with it. I could see it getting bigger, but not mainstream.

Manifold in the wild: A Tweet by Carson Gale

@KellerScholl @thephilosotroll https://manifold.markets/CarsonGale/will-the-effective-altruism-movemen-01f70cdf45f6?referrer=CarsonGale

This is not a fair-minded article, but it's Wired.

https://www.wired.com/story/effective-altruism-artificial-intelligence-sam-bankman-fried/

"This philosophy—supported by tech figures like Sam Bankman-Fried—fuels the AI research agenda, creating a harmful system in the name of saving humanity"

@FlawlessTrain A really weird article, given all the EA commentary I've read that has the same concern about car-race dynamics causing AI companies to take risks.

Most community-building efforts seem to be mildly to wildly successful, and early EA-aligned people have gone on to achieve pretty cool things (Our World in Data, the normalisation of challenge trials, etc.). If this track record continues, I would expect EA to keep attracting talented people.

I am often concerned about some drawbacks of community building, but predicting "No" is essentially shorting a young company's stock with a good track record of innovation and a low stock price. I would give No <15%.

Question to OP: how would you resolve this if EA splintered into multiple groups?

@ElliotDavies I could be convinced, but my intuition is that the resolution will depend on groups that specifically label themselves EAs. I.e. a group called the Heroin Rat Club that cites EA principles would not count unless they also called themselves EAs.

@CarsonGale What if the founder(s) were previously prominent members/organisers of a local EA group?

@ElliotDavies The question involves EA specifically, so any other groups are only relevant to the extent they are affiliated with EA.

@CarsonGale I take this reply to mean that, upon resolution, splinter groups taking members with them would effectively reduce the size of EA.

@ElliotDavies If they do not self-affiliate with EA, that would be correct. E.g., a rationalist that doesn't identify with EA (even if rationalists are associated with EA - you wouldn't just add up all rationalists and add to the EA count)

@CarsonGale I think of rationalists more as a case of convergent evolution than as a descendant of EA.

Tbc, you're free to resolve as you wish; I was just considering people who came from EA but splintered off to be EA.

At the moment, resolution seems sensitive to these issues, in addition to name-change issues.

@ElliotDavies Sorry for any confusion - hopefully at this point it's clear. This market is intended to cover self-identifying EAs.

@MartinRandall Under "Market Details", it says:

Market created Oct 21, 2022, 8:29:12 AM MST

So I assume that's the baseline.

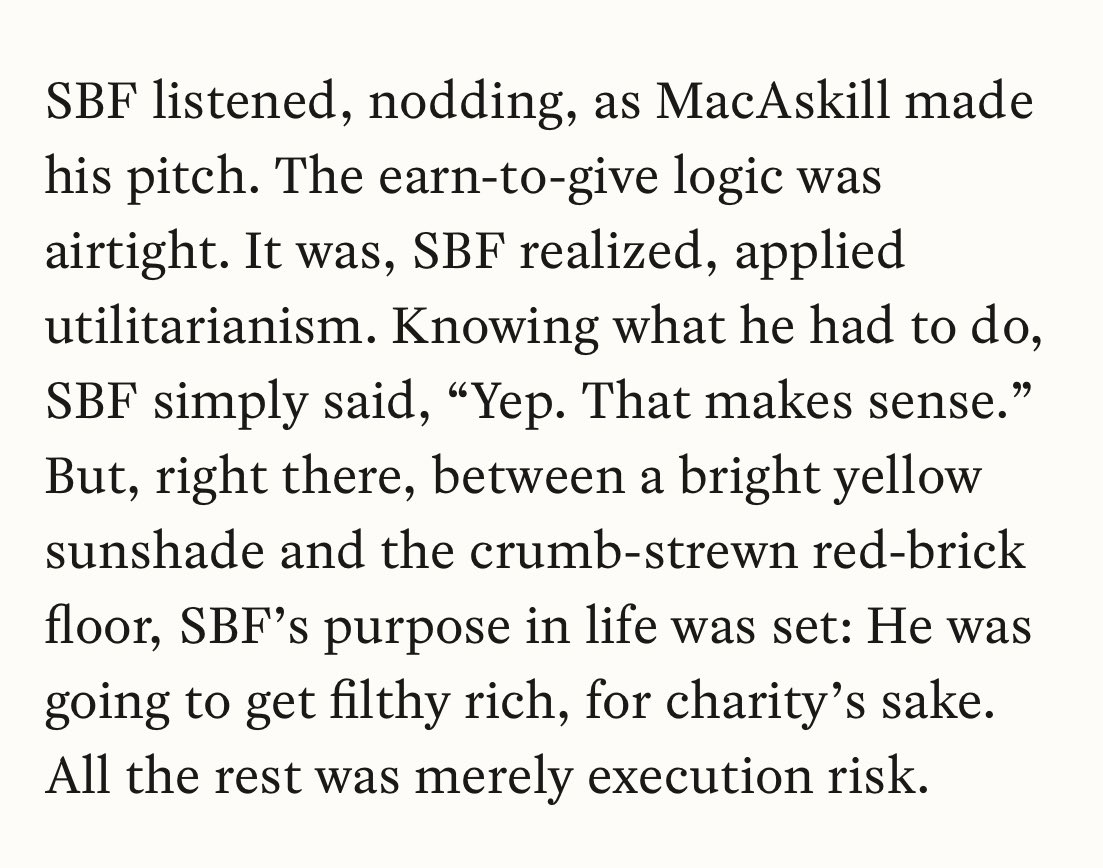

Noticing Marc Andreessen, David Sacks, and AGM had some things to say, Tyler Cowen is still a statist, and the EA forum has distanced itself from the entire mission on which SBF set out—to profit at all costs and give it to stuff he kinda liked

EA is a good meme, given that it has nothing to do with either effectiveness or altruism (even the original malaria stuff neglected that maybe an overpopulated world with IQ collapsing due to dysgenic reproductive trends exacerbated by valuing all lives equally isn’t exactly a world capable of great things, much less survival, and much of the “AI risk” stuff is a clownish proto-religion)

Prediction: obviously the EA movement will grow as all universalist movements do (cf. communism, wokism, and all the rest), but the critics who do not subscribe to rigged left-wing morality masquerading as the “highest good” will be more vocal.

Under the criteria listed here—that’s going to be “more popular” but no longer as naive, above reproach, or able to be so cringe as this;

Further prediction: new thought leaders emerge, and causes continue to move from the lowest tiers of Maslow’s hierarchy of needs to higher ones (survival first, recently security, next affiliation/belonging) until it rediscovers and converges on conventional morality that has stood the test of time.

(if you want to be one step ahead, look at middle tier causes, as the “survival” stuff is passé, the AI apocalypse and Gretaism are no longer cool, and frankly social trust could use some repairing anyway)