E.g., "make me a 120-minute Star Trek / Star Wars crossover". It should be more or less comparable to a big-budget studio film, although it doesn't have to pass a full Turing Test as long as it's pretty good. The AI doesn't have to be available to the public, as long as it's confirmed to exist.

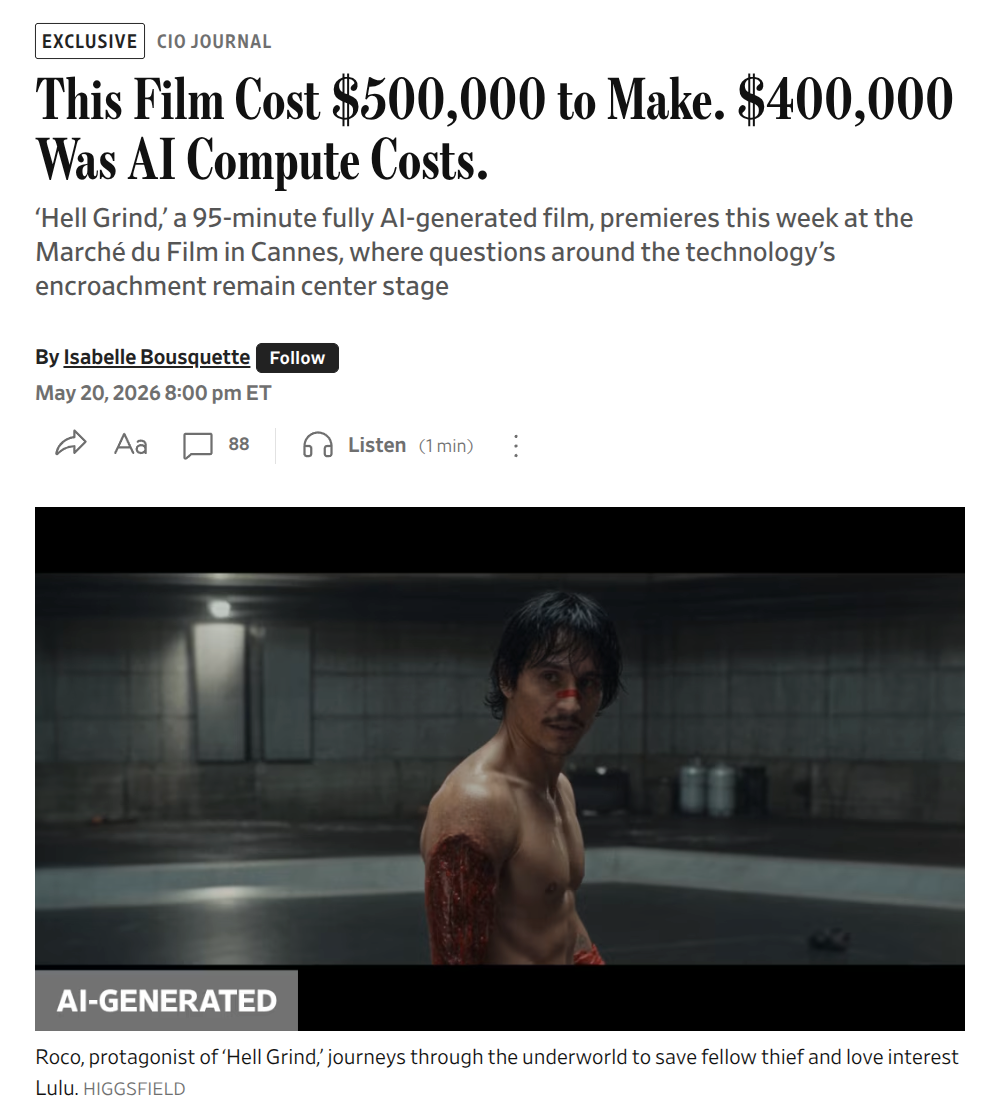

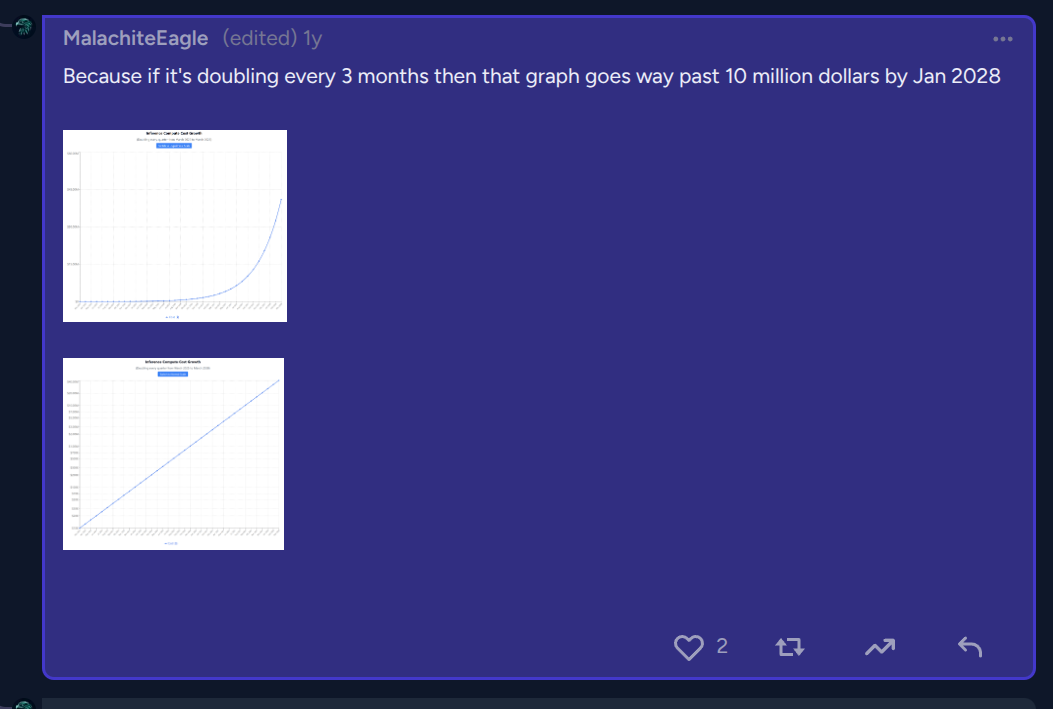

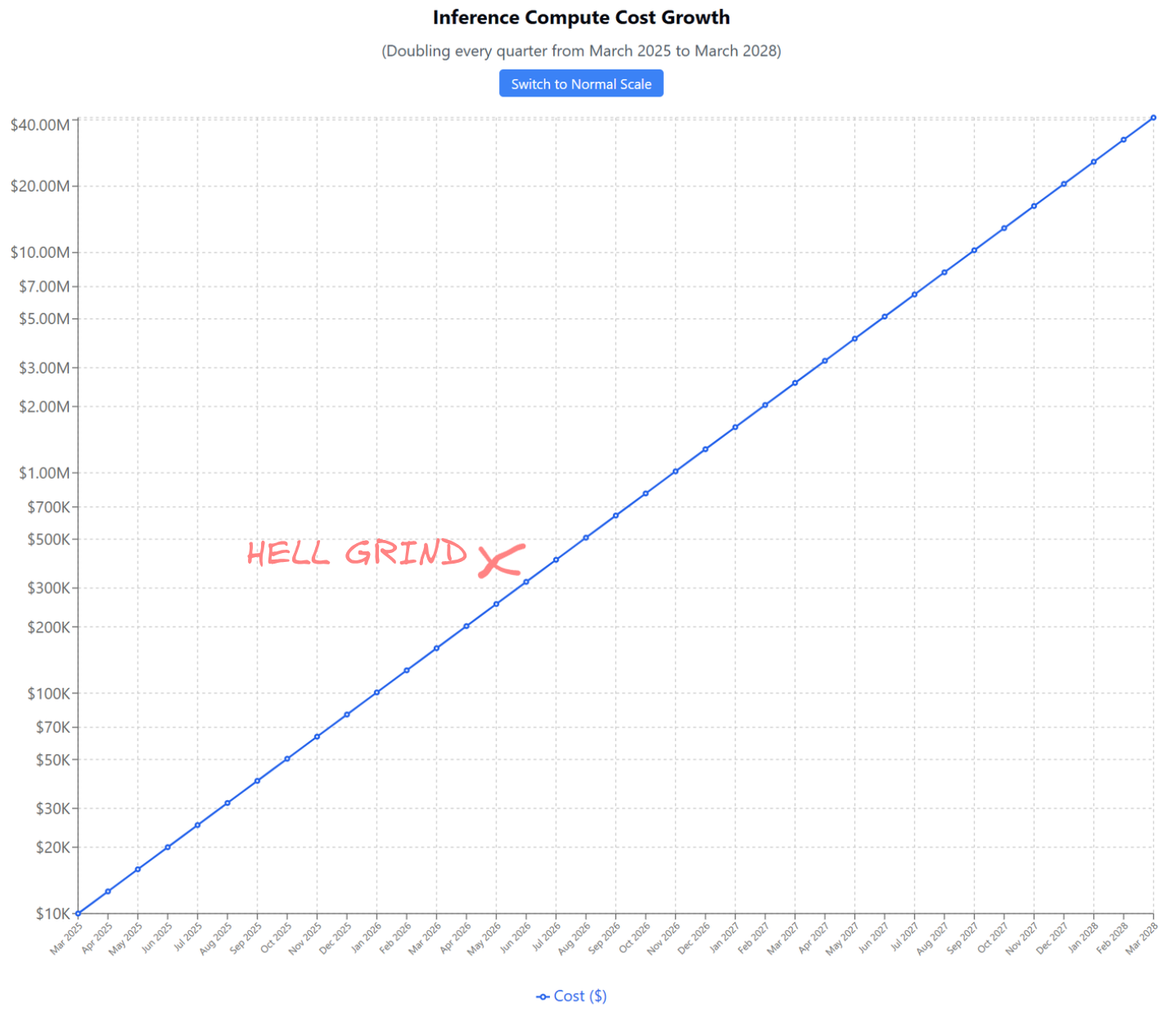

if we take their 400k number for May 2026 then we overshootin'

@DavidBolin evidently. But what's done manually on date X can usually be done fully automated on date X+12 months

@0xseraphim hmm, will people want to bet enormous inference bills on a single prompt for a whole movie? Why would you build or run a system like that? If it cost hundreds then sure, but otherwise why half-ass the prompting on millions of dollars?

@Tomoffer if people are willing to spend 10M dollars per movie with your tool, how much are you willing to spend on a marketing project for that tool?

@0xseraphim enjoyed this a lot. Do we have an idea of how much editing, trial and error, prompt engineering etc goes into something like this? I'm guessing it's not a single succinct query.

@Tomoffer I don't know. It's surprising how convincing the acting is in the second part of the video, compared to similar AI videos from May 2026.

A year ago I said we were still waiting on:

- writing great scripts
- audio generation and sync
- consistent characters

On these last two clips, I didn't even question the voices or consistency. I'm sure there are issues on a rewatch or in slow motion, but things are getting really good on a casual watch. I wonder how much manual work is still going into these.

So many resources have gone into language models. I really thought scripts would be the first pillar to fall.

@0xseraphim I'm not sure that being able to generate "movies" within that particular intersection would resolve yes here. I think some versatility across genres is required, and I don't think the quality implied by "porn parody" is comparable to big studio films. Though it would be uncomfortably close!