Home grown means the model should be trained from scratch by a Chinese company. The computers can be outside of China and can use any sort of hardware, and the data could come from anywhere, but the model should not be a distilled or finetuned version of open source models developed by another country; it should be well-known for trained from scratch by the company itself.

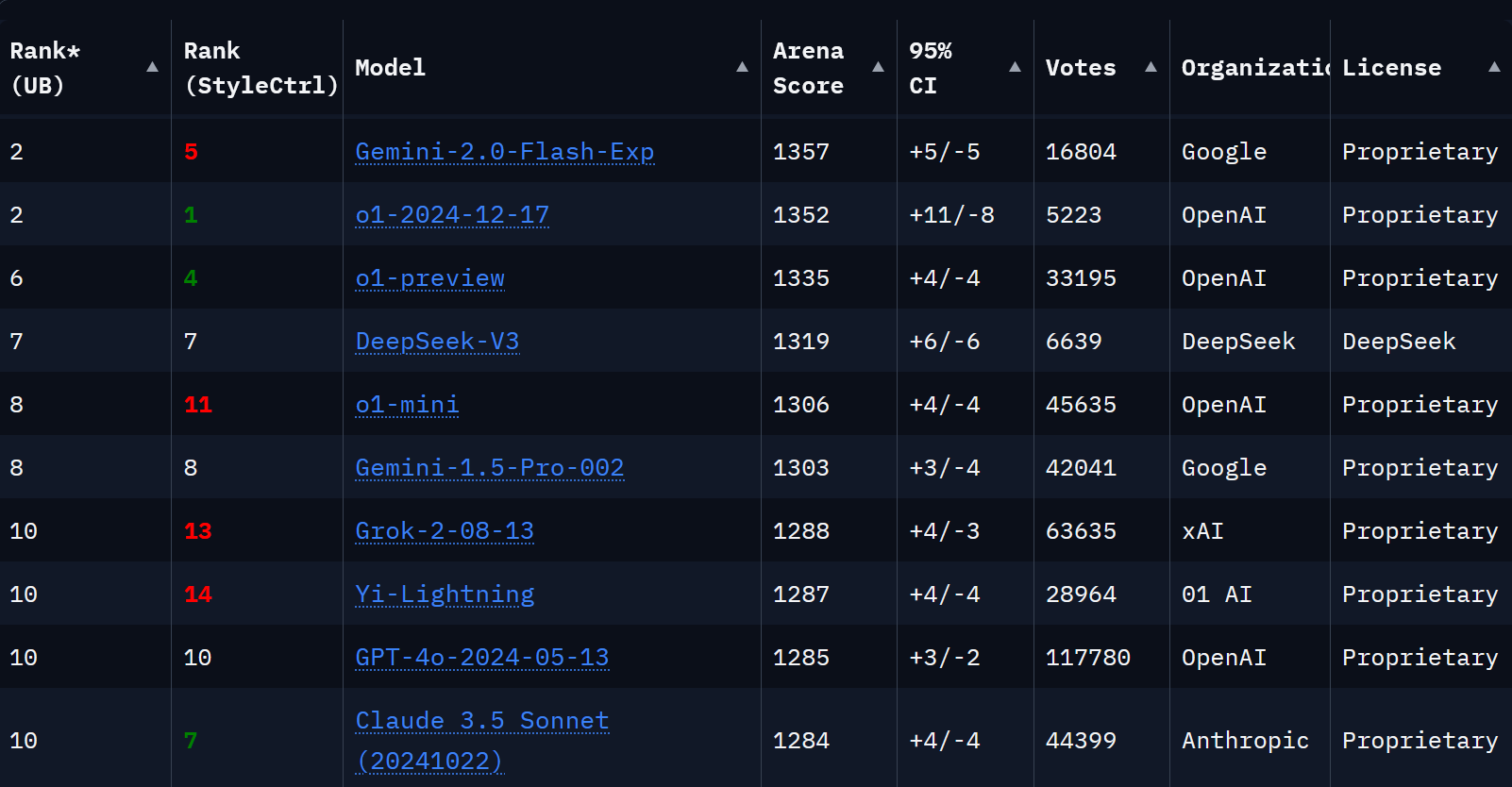

Competitive means the home grown model leads or ties one of the top models from OpenAI, Google, or Anthropic on the Chatbot Arena leaderboard.

🏅 Top traders

| # | Trader | Total profit |
|---|---|---|
| 1 | | Ṁ762 |
| 2 | | Ṁ642 |
| 3 | | Ṁ199 |
| 4 | | Ṁ169 |
| 5 | | Ṁ95 |

@Eliza Should resolve as YES. There's a new Chinese model called DeepSeek v3. It clears the bar by beating Anthropic's strongest model, Claude 3.5 Sonnet (Elo 1319 vs. 1284; see https://lmarena.ai).

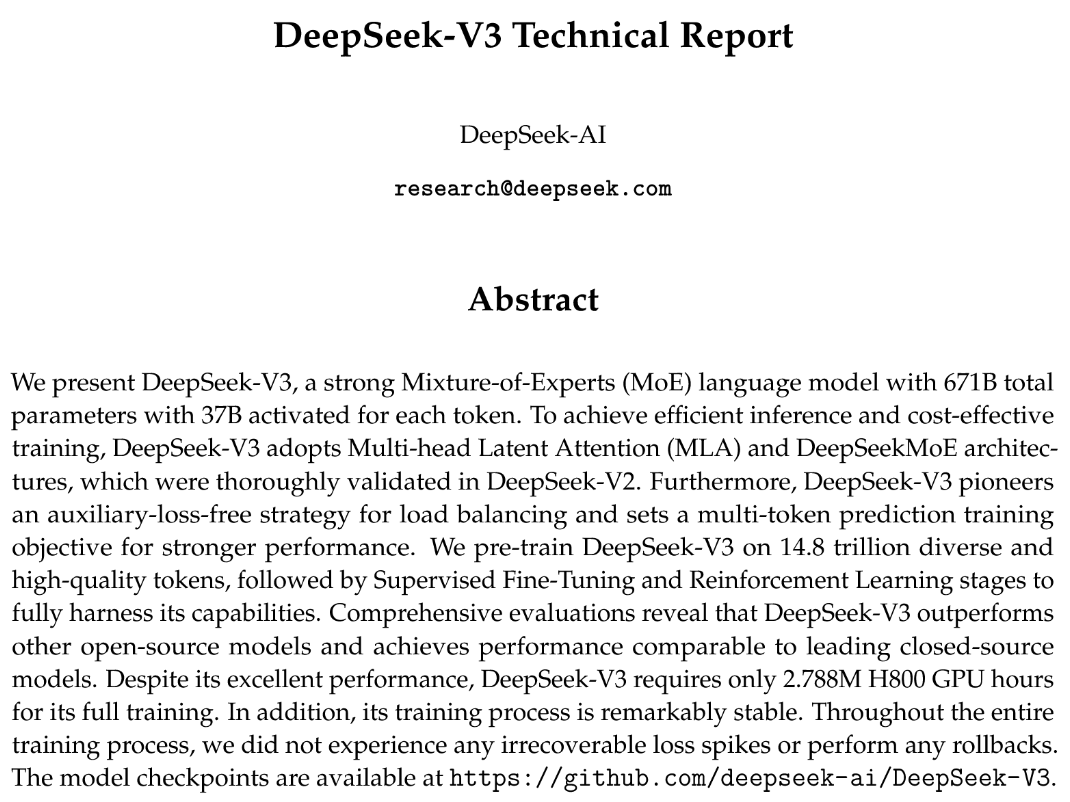

It is not a distilled or fine-tuned version of an open source model developed by another country. The company behind it released the model (it's unlike any other open source model) and meticulously described the process used to train it from scratch; see https://github.com/deepseek-ai/DeepSeek-V3/blob/main/DeepSeek_V3.pdf

The company that trained the model is based in Hangzhou, China, see https://en.wikipedia.org/wiki/DeepSeek

@ChaosIsALadder I don't fully understand the entire question but your provided data looks convincing enough. I'll resolve this now but if someone shows up tomorrow claiming you were hoodwinking us, we can always unresolve it!

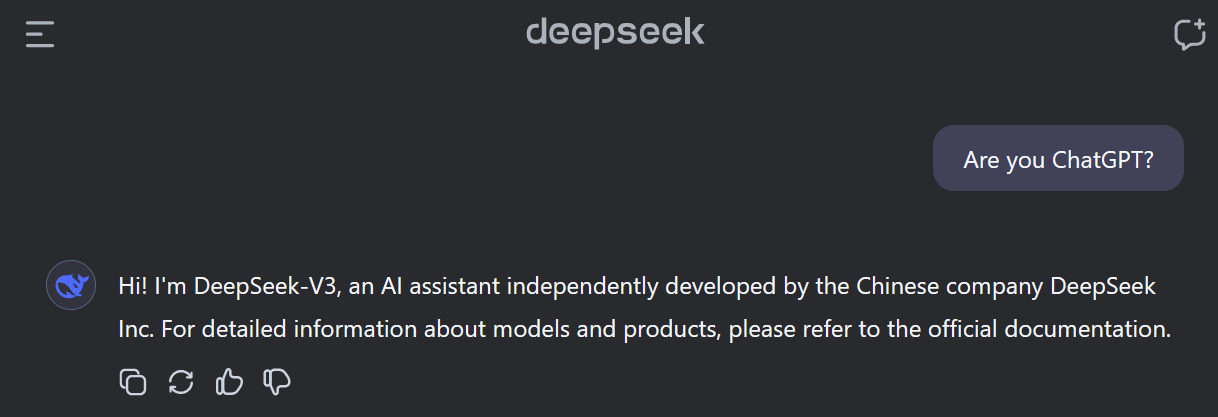

@ChaosIsALadder Why does it think it's ChatGPT when asked? I thought they were training on ChatGPT. Is it just too prevalent in the training data now? Or did I hear wrong that it thinks it's ChatGPT when asked?

@AndrewMcKnight Are you sure you asked the correct AI? I tried asking the model on https://chat.deepseek.com if it's ChatGPT and it responded it's not. DeepSeek also has its own training data consisting of 14.8 trillion tokens of text, which has nothing to do with ChatGPT.

@jacksonpolack I don't know whether this market should resolve or not, but I know the original market creator specifically created this market in contrast to this one: https://manifold.markets/Ledger/will-china-be-competitive-in-the-ll. The market creator has several comments there explaining their thinking.

@jacksonpolack The creator already deleted his account. He accused Yi-Lightning of being a distillation of Llama. At the time, GLM-4 was also ranked higher than Anthropic. So either this market should’ve resolved yes or all Chinese models are distillations of Llama.

Yi-34B had the same architecture as Llama, but afaik there’s no evidence to suggest Yi-Lightning was a distillation of Llama 3.1.

@jacksonpolack Having read the previous thread, I think that the creator wished to depart from the previous question by requiring the model to not be a distillation/finetuning of another model. In this question, “well-known for trained from scratch” is an important phrase, and, at least to my limited knowledge, that condition isn’t met yet, as the training procedure is at best opaque.

tl;dr: I think the market has not met the criteria for YES.

Can you clarify whether the decision is based on the state at 2025/12/31, or whether it is enough for the “competitive” criterion to be met for some duration at some time before then?

Does the Yi Lightning model count, and if not, what exactly is the threshold? It is beating GPT 4o and Claude 3.5 in Chatbot Arena.

@mods OP’s account is deleted, but (depending on interpretation) this question might already have met criteria to be resolved Yes.

Edit: compare to this very similar question that resolved yes once Yi Lightning hit the leaderboards: https://manifold.markets/Ledger/will-china-be-competitive-in-the-ll