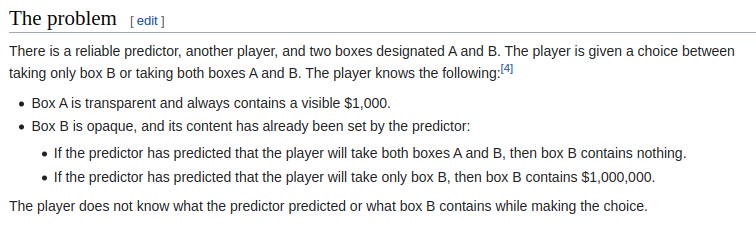

https://en.wikipedia.org/wiki/Newcomb%27s_paradox

Assume you want to maximize the amount of money you get.

Resolves to whichever position I find the most convincing at market close after having considered and engaged with some of the arguments posted. (I will only respond to arguments that I think will add something to the discussion.)

I will not bet in this market or share with anyone what my current position is. However, I will play devil's advocate against some positions in the comment section.

In case of disagreement on definitions, I will decide on the definitions that seem the most reasonable to me after having considered people's arguments.

Closes January 13th


I'm not too sure whether I think the best answer would be "Take B" or "Other". I agree with Jack's analysis that if we take B then our expected payoff is higher.

But it seems to go against the intuition that since the contents have "already been set by the predictor", our decision can't causally affect the contents of the box. It seems like if you can choose between B or A+B without affecting the contents of the box, then you should choose A+B. I think the problem is that you don't have a free choice at all. Your decision is acausally linked to the contents of the box: if they were able to decide what should go in the box, then they were able to determine what you will pick. The existence of a reliable predictor requires the nonexistence of free will.

So while I think the answer is "Take B" in the sense that you'll be happiest in the worlds where you take B, I think framing it as a free choice kind of contradicts the required assumption of no free will for a reliable predictor to exist in the first place, so I think there's some case to be made for "Other".

@Preen "Our decision can't causally affect the contents of the box" is definitely the biggest concern, and is why when I initially saw the problem I thought two-boxing was a dominant strategy. But after learning more about the topic, I think that statement might be technically true but isn't quite the right way to think about it, and here's how I like to reframe the question to build up intuition for a counterargument:

First, let's say you are designing an AI to play a variety of game theory games. You need to decide how to program the AI to make decisions - what decision algorithm it should use. That decision algorithm causally affects the contents of the box. So I think from this framing, it's clear that you want a decision algorithm that chooses to one-box, and there's no causal weirdness. The causal graph is as follows (arrows mean thing A causally affects thing B)

decision algorithm -> box contents

decision algorithm -> decision

box contents -> outcome

decision -> outcome
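As a rough illustration of that graph, here is a minimal sketch in Python. The payoffs (1,000 in box A, 1,000,000 in box B) are assumed from the standard statement of the problem and are not given in this thread. The names `one_boxer`, `two_boxer`, and `play` are hypothetical, and the predictor is modeled in the simplest possible way: it just runs the agent's decision algorithm ahead of time.

```python
from typing import Callable

# Assumed payoffs from the standard statement of the problem (not given in this thread).
BOX_A = 1_000            # always contains this amount
BOX_B_PRIZE = 1_000_000  # placed in box B only if one-boxing is predicted

# A decision algorithm returns "B" (take only B) or "A+B" (take both).
Algorithm = Callable[[], str]


def one_boxer() -> str:
    return "B"


def two_boxer() -> str:
    return "A+B"


def play(algorithm: Algorithm) -> int:
    # decision algorithm -> box contents:
    # the predictor inspects the algorithm (here it simply runs it) and fills box B.
    prediction = algorithm()
    box_b = BOX_B_PRIZE if prediction == "B" else 0

    # decision algorithm -> decision:
    # later, the agent runs the same algorithm to make the actual choice.
    decision = algorithm()

    # box contents + decision -> outcome
    return box_b if decision == "B" else BOX_A + box_b


print(play(one_boxer))  # 1000000
print(play(two_boxer))  # 1000
```

In this toy model the choice never changes what is already in the box. The only thing that differs between the two runs is which algorithm was handed to `play`.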

Now, what about us as humans? I think we actually can think about it in a very similar way. We can in fact reason about what decision algorithms to use - coming up with evidential and causal and logical decision theories are examples of that! I personally used to think causal decision theory was correct, and when I initially saw the Newcomb problem I had a strong intuition that two-boxing was correct, until I learned more about it and then learned that there were formal decision theories that improved on causal decision theory - then I changed my mind and became a one-boxer.

Right now we are doing this reasoning before the box contents are set (talking about a hypothetical future Newcomb problem), so it's easier to see the causal arrow from the decision algorithm to the outcome. If you're doing the same reasoning in the middle of the problem, then the questions that you raise about what free choice / free will means become thornier, but I'm generally not too worried about those. Newcomb-like problems actually do exist in real life, and people can predict others' actions with better-than-chance accuracy. (The predictor in the Newcomb problem doesn't need to be perfect: one-boxing still has the best expected payout if they are a little better than chance at predicting.)

I think Parfit's hitchhiker is a more realistic and intuitive problem statement. Slightly abbreviated: you're about to die stranded in a desert, and someone will save you only if you promise to give them $100 afterwards and they believe your promise, even though you are free to break your promise later. If they are even slightly better than chance at predicting whether you will keep your promise, and you value your life much more than $100, then you want to make a believable promise, and that probably means that you want to end up paying them $100. Maybe you could get away with breaking the promise, but is it worth the risk of them being slightly less likely to believe you and therefore leaving you to die?
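The hitchhiker trade-off can be sketched numerically. This is only a toy model under assumptions that are not in the comment above. The driver reads your true disposition (payer or non-payer) correctly with probability `accuracy`. Dying is scored as 0. `life_value` is a hypothetical dollar figure standing in for "much more than $100".

```python
def expected_value(pays: bool, accuracy: float, life_value: float, fee: float = 100.0) -> float:
    """Expected value for an agent whose disposition is to pay (or not to pay).

    The driver rescues you only if they predict you will pay, and that
    prediction matches your true disposition with probability `accuracy`.
    """
    if pays:
        # Rescued when the driver correctly reads you as a payer; you then pay the fee.
        return accuracy * (life_value - fee)
    # Rescued only when the driver wrongly reads you as a payer; you keep the fee.
    return (1.0 - accuracy) * life_value


def break_even_accuracy(life_value: float, fee: float = 100.0) -> float:
    # Solve accuracy * (V - fee) == (1 - accuracy) * V for accuracy.
    return life_value / (2.0 * life_value - fee)


if __name__ == "__main__":
    v = 1_000_000.0  # hypothetical value placed on survival
    print(break_even_accuracy(v))
    print(expected_value(True, 0.51, v), expected_value(False, 0.51, v))
```

With these made-up numbers the break-even accuracy sits only a hair above 50%, which matches the "even slightly better than chance" claim. The larger `life_value` is relative to the fee, the closer the threshold gets to a coin flip.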

@Preen I have two arguments:

1) I think the sleight of hand here is in the question definition. The existence of a “reliable predictor” as defined in the question restricts the set of worlds in which you might be playing the game.

If such a predictor can exist, it means it’s /possible/ to predict choices in advance with perfect accuracy. Either because free will doesn’t exist, or for some other sneakier reason. It’s not necessarily a question in our universe if you believe in free will.

However, the math doesn’t require the predictor to be strictly infallible. Imagine the predictor is wrong some fraction epsilon of the time, and work out the graph as follows: you make a decision about what to do, then they try to predict what you’ll do, and then you make the choice. For a small enough value of epsilon, it’s worth convincing yourself beforehand to take one box.
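To make the epsilon argument concrete, here is a rough derivation. It assumes the standard payoffs, which this thread doesn't state: box A holds $K = 1{,}000$ and box B holds $M = 1{,}000{,}000$ when one-boxing is predicted. It also assumes the predictor errs with the same probability $\varepsilon$ whichever way you choose.

$$
\begin{aligned}
E[\text{take B}] &= (1-\varepsilon)\,M \\
E[\text{take A+B}] &= K + \varepsilon\,M \\
(1-\varepsilon)\,M > K + \varepsilon\,M \;&\Longleftrightarrow\; \varepsilon < \frac{M-K}{2M} = 0.4995
\end{aligned}
$$

Under those assumptions, the predictor only has to be right slightly more than half the time (above 50.05%) for one-boxing to come out ahead in expectation.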

There are more complicated arguments for the case where you don’t get to work out things ahead of time.

2) If you don’t believe you should take one box, you are intending to try to trick the predictor in the future. That plan is very unlikely to succeed if you publicly commit to not believing you should take one box.

The optimal decision algorithm or decision theory is the one that would result in the highest expected money (since we're assuming that utility = money in this problem).

We can contrast this with the statement of causal decision theory, which says that the optimal decision is the one that results in the highest profits. The two are operating on different levels of meta, and causal decision theory gets the wrong answer because it doesn't properly model the fact that the decision algorithm affects the box contents.

This is formalized in logical decision theories: https://arbital.com/p/logical_dt/. These decision algorithms correctly choose to pick box B only (which is in fact the best move).


I think regardless of what it is correct to do in the situation if you haven't previously considered it, it is correct to have been convinced to only take B prior to encountering the situation. That doesn't even involve arguments about causal direction or whatever; it is good to have experienced being so convinced, because in all future situations you will get the higher payout. So you should be convinced in that way and resolve to B.

@MichaelLucy I don’t think that argument works out. It’s also possible you might encounter a situation where a reliable predictor gives you a reward if and only if it thinks you would take both boxes in Newcomb's paradox.

@1941159478 But that's a completely different problem - the claim is that you should have been convinced to take the action that would result in the highest profits. (See my other comment that I just added about logical decision theories).

@jack What I was trying to say is I don’t think you can get out of the gnarly decision theory debate that easily. I understood @MichaelLucy’s argument to be that even if you are some kind of hardcore causal decision theorist, you should basically commit to only taking B right now just in case. But that commitment might have a cost in other situations.

@1941159478 That's a fair point. I think if you're a hardcore causal decision theorist, the thing you need to do is weigh the expected returns from being convinced / committing in this way, vs. the expected costs.

I think the expected returns are "better payouts in a broad class of games with agents who are really really good at modeling you". (I think the thing you should be convinced of is not this specific problem, but the general class of problems.)

I think the expected costs are "there might hypothetically exist an agent that is really really good at modeling you, and also punishes you specifically for having been convinced in this way".

I think the first situation is way more likely to actually exist at some point. I can imagine a bunch of good motivations for an agent to want to coordinate with other agents that it can model well.

I'm having a lot more trouble imagining cases where it makes sense for the second situation to exist. But that might just be a lack of imagination; if someone has a good argument for why agents that punish one-boxers should be common, I'd be interested.

It’s the least popular here so far, but as per the 2020 PhilPapers Survey, taking A and B is the most popular answer among professional philosophers: https://survey2020.philpeople.org/survey/results/4886

I don’t really have anything novel to add to the debate, but maybe some people here will enjoy the dramatized version: https://www.princeton.edu/~adame/papers/newcomb-university/newcomb-university.pdf

Other: take a quantum random generator and apply the strategy of taking only B 50.1% of the time and A and B 49.9% of the time. The predictor can't know the result of the quantum dice, and its most rational option is to predict you will take only B.

If you don't have a quantum random generator, you have to assume you can either be in the real world or in the simulation that the predictor is running to know your choice in advance. As your behavior in the simulation will determine if B will contain one million $, you have to take only B.

However, if you have a special trick to know if you are in a simulation, you can take only B in the simulation and both A and B in the real world.

@Zardoru The reasonable reading of the problem is that we are thrown into the scenario as we are, and don't have a quantum random generator on hand. But if you carry a quantum random generator around with you in your daily life, then I agree that's the answer for you.

@EliasSchmied Also, there are standard variants of the problem that remove any motivation for using randomness. I'd argue that the spirit of the problem is that if you pick A about half the time, then B should contain half the value in expectation.

@EliasSchmied Actually, I always carry my phone with me so I can use https://qrng.anu.edu.au/dice-throw/

@Zardoru I think the standard variant for that is equivalent but with the clause “if you randomize anything they’re both empty”
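The three predictor rules mentioned in this thread can be compared with a small sketch. Everything here is an assumption for illustration: the payoffs (1,000 in A, 1,000,000 in B) come from the standard statement of the problem, the rule names are made up, and `q` is the probability with which you take only B.

```python
BOX_A = 1_000            # assumed standard payoff, not stated in this thread
BOX_B_PRIZE = 1_000_000  # assumed standard payoff, not stated in this thread


def expected_payoff(q: float, rule: str) -> float:
    """Expected payoff when you take only B with probability q.

    rule (hypothetical names):
      "majority"          - B is filled if one-boxing is your more likely choice
      "proportional"      - B holds q * prize in expectation
      "punish_randomness" - any randomizing empties both boxes
    """
    if rule == "majority":
        expected_b = float(BOX_B_PRIZE) if q > 0.5 else 0.0
    elif rule == "proportional":
        expected_b = q * BOX_B_PRIZE
    elif rule == "punish_randomness":
        if 0.0 < q < 1.0:
            return 0.0  # both boxes are empty
        expected_b = float(BOX_B_PRIZE) if q == 1.0 else 0.0
    else:
        raise ValueError(f"unknown rule: {rule}")
    # Box A is only collected on the (1 - q) share of runs where you take both.
    return expected_b + (1.0 - q) * BOX_A


if __name__ == "__main__":
    for rule in ("majority", "proportional", "punish_randomness"):
        print(rule, expected_payoff(0.501, rule), expected_payoff(1.0, rule))
```

In this model the 50.1% strategy only beats always taking B under the "majority" rule, and then only by a little under half of box A. Under the other two rules, always taking B does better.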