I will resolve this based on some combination of how much it gets talked about in elections, how much money goes to interest groups on both topics, and how much of the "political conversation" seems to be about either.


Position: none.

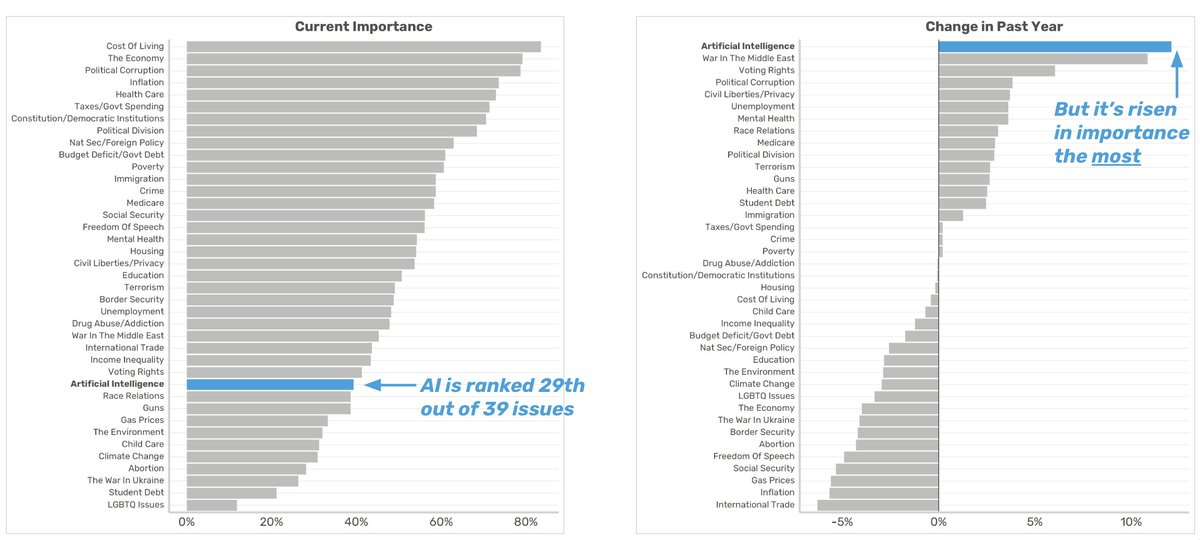

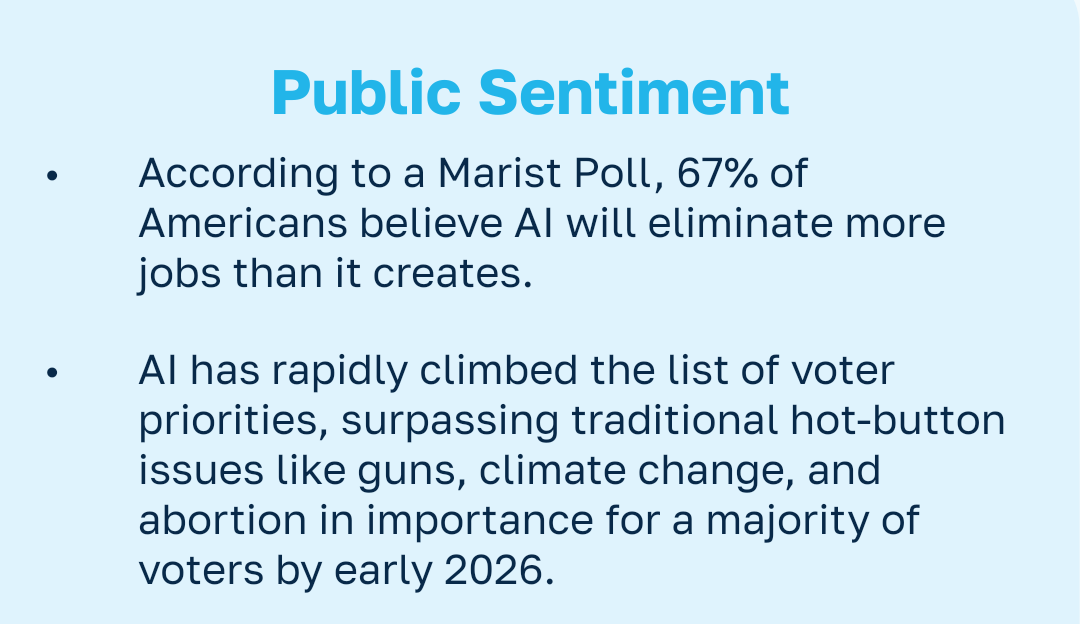

Crux postcard: I think ~90% is high but not absurd. AI already has breadth: NCSL is tracking state AI legislation across funding, government use, private-sector use, health, discrimination, and election-adjacent topics; Pew’s 2025 survey has Americans much more concerned than excited about AI; KFF found abortion was the top 2024 vote issue for about 12% of voters. That mix makes AI plausibly bigger on “conversation / interest groups,” but weaker on abortion’s single-issue voter + party-identity role.

Would move me up: explicit AI planks in 2026/2028 campaigns, AI PAC/interest-group spending near abortion-group scale, or polling where voters name AI as a top issue rather than merely “concerning.”

Would move me down: AI stays a cross-partisan tech/economic concern, or abortion re-salience rises through court fights/state ballot measures.

Resolution crux: I’d separate “politically discussed” from “vote-determinative/polarizing.” If the resolver weights spend + media conversation most, YES is much easier than if single-issue voter identity matters most.

Sources: NCSL AI legislation database https://www.ncsl.org/financial-services/artificial-intelligence-legislation-database ; Pew AI attitudes https://www.pewresearch.org/science/2025/09/17/how-americans-view-ai-and-its-impact-on-people-and-society/ ; KFF abortion voters https://www.kff.org/womens-health-policy/1-in-8-voters-say-abortion-is-most-important-to-their-vote-they-lean-democratic-support-biden-and-want-abortion-to-be-legal/

This now seems too high. We have to keep in mind that the framing is of "a political issue". I don't think a policy dispute or a general sense that it is of consequence counts. For instance, the farm bill is massively important, but attention mostly stays within the confines of Congress and directly pertinent interest groups. There is nothing analogous to the way that abortion strictly delineates and polarizes the electorate into pro-life or pro-choice stances. AI may continue to look more like the farm bill and its components (e.g., SNAP, farm subsidies, SOB act) than like abortion.

From the Alex Bores AI tax plan: https://www.alexbores.nyc/files/Bores-Dividend_Policy.pdf

It's unclear what the source is.

@EliLifland What is the methodology and surveyed population for this poll? I find it difficult to believe that ~40% of the general public plainly answers "AI" when prompted with the question "what's the most important issue for you currently?"

@bens I had done that. The bottom of the thread has a link which asks me for my personal info in exchange for a promise to send me their research by email. I was hoping the person posting the research would just be able to briefly answer those two simple questions.

https://youtu.be/K3qS345gAWI?si=NICAD4D66ZPTF3tu

We seem to have reached a stalemate on abortion lately, while AI is getting increasingly more attention.

@Vergissfunktor I would be ~a single issue voter on AI if there was a solid US presidential candidate in 2028.

@DavidHiggs I think they implicitly meant "a nontrivial number of single issue voters". Of course there is at least one single issue voter for pretty much any issue, cf lizardman constant

@bens I think we are definitely talking about AI more (even in the primary discourse now), I'd bet there's more money going to interest groups on AI by this point (although I'd love for someone to run the numbers), and as for the "political conversation" by vibes? lol of course

@bens I mean just look at the feeds of any normie/right wing/left wing politico on twitter / bluesky / facebook. It's all AI.

@bens okay so my best guess on political spending on abortion across all elections in 2024, including the federal elections, is somewhere between $250 million and $500 million.

Just in 2025 alone, which is not even a midterms year, this NYT piece says pro-AI groups spent about $80 million. https://www.nytimes.com/2026/02/21/us/politics/ai-money-midterms-openai-anthropic.html

I'd guess that in 2026, a midterms year, we'll probably expect to see something on the order of $200 million in spending by pro- and anti- AI groups (almost entirely pro- lol) and something quite comparable in abortion spending. I'd probably give 60/40 odds to AI being higher in spending, but hard to say. In 2028... on the other hand, I expect AI to very likely eclipse abortion spending.
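For a rough sense of scale, here is a back-of-envelope sketch that uses only the figures quoted in this comment. The variable and function names are made up for illustration, and the inputs are the commenter's guesses rather than audited totals. Note too that 2024 was a presidential year, so it likely overstates what abortion spending will look like in a midterm.

```python
# Figures quoted in the comment above, in millions of USD.
# These are the commenter's estimates, not verified totals.
ABORTION_2024_LOW, ABORTION_2024_HIGH = 250, 500  # best-guess range for 2024
AI_2025 = 80          # pro-AI group spending in 2025, per the linked NYT piece
AI_2026_GUESS = 200   # rough guess for combined AI spending in 2026


def share_of_range(amount, low, high):
    """Return amount as a fraction of the high end and of the low end of a range."""
    return amount / high, amount / low


lo_2025, hi_2025 = share_of_range(AI_2025, ABORTION_2024_LOW, ABORTION_2024_HIGH)
lo_2026, hi_2026 = share_of_range(AI_2026_GUESS, ABORTION_2024_LOW, ABORTION_2024_HIGH)

print(f"2025 AI spend vs. 2024 abortion spend: {lo_2025:.0%} to {hi_2025:.0%}")
print(f"2026 AI guess vs. 2024 abortion spend: {lo_2026:.0%} to {hi_2026:.0%}")
```

On these numbers, off-year AI spending is already somewhere between a sixth and a third of the 2024 abortion estimate, and the 2026 guess lands at 40% to 80% of it.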

@bens assuming it is bigger by the midterms (not convinced but assume), will it still be in 2028, when resolution should be assessed?

@MachiNi if we’re not in an AI bubble, or it doesn’t burst, very likely the answer will be yes, and by a larger margin than at the midterms

@DavidHiggs could be. Or it bursts before the election and the fact that it did is a topic, or it bursts tomorrow and it wears off, or it keeps growing steadily and recedes into the background because it’s no longer top of mind for voters while, say, abortion bans have become more salient again, or… or. My point is that whatever ‘bigness’ we attribute to AI now is at best weak evidence of what it’ll be like in 2028.

@MachiNi well sure, theoretically anything could happen, but AI in 2 years if there isn’t a bubble burst, is AI with 2 more years of rapid capability progress: increasingly large market impacts, revenue shares and capex spends, probably starting to hit real job displacements finally, etc., which means increasing incentive to spend money influencing things.

Plus, Dems will almost certainly swing at least the House, meaning AI companies might not escape meaningful regulation so freely and easily, which means more spending.

Aside from generic fog of war and the possibility of a bubble bursting, everything we know points to AI becoming more spent on and salient in 2 years, not less.

@MachiNi if there is an AI bubble, then it will DEFINITELY be a bigger topic than abortion in 2028. It would cause a financial crisis as big or bigger than 2008!

@bens hmm, you’re right that it could be bigger than abortion because the crash is so big, but I don’t expect a bubble popping to cause 2008 levels of recession. The tech’s real and useful, kinda like the internet, so a bubble would just be too much enthusiasm too quickly, and thus a more moderate recession like the dotcom crash.

Probably. There are disanalogies.

@bens Yea I'm not gonna lie I thought this one was like 10% at time of buying. Ready to admit it's almost certain to resolve YES

@copiumarc I hate finding out I had incoherent beliefs years ago (was also a NO bettor) but better than not finding out XD