Will be decided subjectively unless I see a poll on this


@Gigacasting Climate change might not cause extinction that soon, but it would cause a huge depression and kill millions of people. Manifold is full of AI doomers; a 40% probability of AI doom but only a 5% probability of other doom is crazy to me. That being said, I think the American population will see AI as a bigger risk.

@xyz I wanted to say the tsunami kind, but I'd imagine that an asteroid impact falls under this kind, and it's listed separately, so... maybe they actually mean the pillar of fire kind?

@YoavTzfati Resolves exactly at end of 2028. I’m sure in the meantime some big event or other will get people worried about AI for a week. But I’m more interested in people’s general vibes rather than attitudes towards particular events

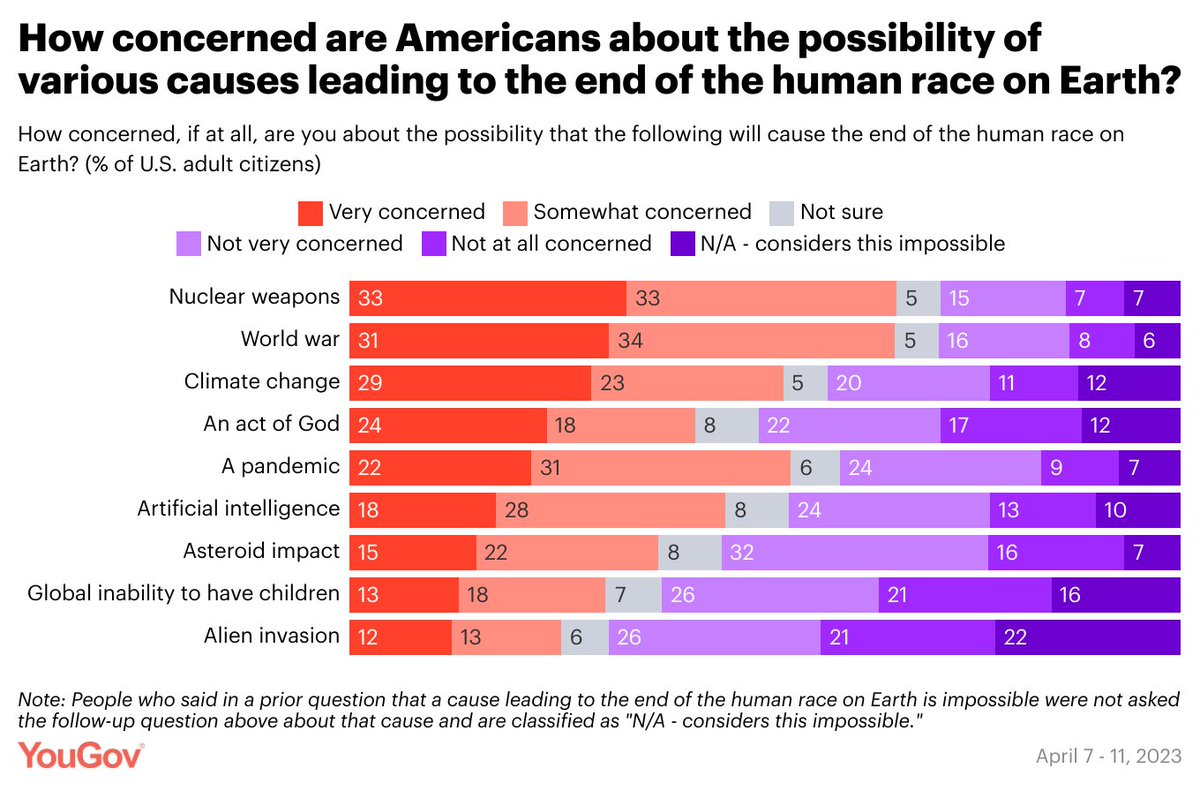

46% are concerned about human extinction due to AI.

39% think it’s likely climate change will cause human extinction.

Cross-survey comparisons are awkward, but possibly this is already true, and it certainly seems likely to be true at some point between now and end of 2028.

How does this market resolve if we are extinct from AI by end of 2028? Seems like it should be YES, but N/A seems defensible too.

@MartinRandall Yeah, it’s unclear what “concerned” means to people in the above survey. I’d like to see a single survey that asks about both AI and climate change.

If we all go extinct by end of 2028, I’ll resolve this to N/A, since no one will be considering AI or climate change at all. I assume humanity would have at least a few seconds before then though, so in practice, the market would probably resolve YES.

@NathanNguyen ah yes, we can bet on that here.

@Zardoru A clear majority say the government should be doing more to combat it: https://www.pewresearch.org/science/2020/06/23/two-thirds-of-americans-think-government-should-do-more-on-climate/

I don't know what the opinions on the level of risk are.

@PatrickDelaney I expect energy consumption from AGI to be a rounding error in the next five years. And technology in transportation, energy storage, and energy transmission will improve in the meantime, lowering carbon emissions.

@NathanNguyen If you think AGI's power consumption will be a rounding error in the next five years, that would imply the energy consumed by AGI will be quite low, which means you might vote on the low side, depending on what you mean by rounding error.

@PatrickDelaney I also think the carbon emissions of typewriters will be a rounding error. But I wouldn’t bet on how much energy it takes to produce a typewriter.