Phrased in more detail: will most philosophy-literate people judge curated pieces of AI-generated philosophy as on par with ideas drawn from the Stanford Encyclopedia of Philosophy (SEP)?

In late 2025, I will recruit three people (henceforth termed judges) who have philosophy degrees or are currently enrolled in philosophy. Each judge will provide the next judge with short (<1 page) summaries of three ideas drawn from SEP posts with which the receiving judge is unfamiliar. I will also pick (in consultation with the previous judge) three philosophical ideas generated by an AI -- curated to maximize likelihood of success. Each judge will read four ideas (3 SEP, 1 AI) and rank them in order of how impressed they would be if a peer had come up with the idea. If the AI-generated idea ranks 3rd or higher out of 4, the judge will be considered to have passed the AI. If two or more judges pass the AI, then this resolves as Yes.
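As a rough illustration of the pass rule above, here is a minimal sketch in Python. The function names and the example rankings are hypothetical and chosen only for illustration; rank 1 is taken to be the most impressive idea.

```python
def judge_passes(ai_rank: int, n_ideas: int = 4) -> bool:
    """A judge passes the AI if its idea is ranked 3rd or higher out of 4,
    i.e. anywhere except last (rank 1 = most impressive)."""
    if not 1 <= ai_rank <= n_ideas:
        raise ValueError("rank must be between 1 and n_ideas")
    return ai_rank <= n_ideas - 1


def resolves_yes(ai_ranks: list[int], judges_needed: int = 2) -> bool:
    """The market resolves Yes if at least two judges pass the AI."""
    passes = sum(judge_passes(rank) for rank in ai_ranks)
    return passes >= judges_needed


# Hypothetical scorecards, one AI rank per judge.
print(resolves_yes([1, 4, 4]))  # one pass only
print(resolves_yes([3, 3, 4]))  # two passes
```

Note that under this rule a single very high ranking does not help: only the number of judges who place the AI idea above last matters.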

The AI ideas must be original, meaning at least as distinct as the difference between sections of the SEP. If there is reasonable doubt as to whether the AI-generated text meets this bar in 2025, I will run the test anyway. Each summary provided to the judge will also provide some discussion of views developed prior to the idea, so the judges will be in a position to judge the creativity of the AI's work conditional on pre-existing work. Any amount of prompting, fine-tuning, etc. is allowed. However, in-context information (e.g. prompts) must not include ideas from the same subfield as the generated idea.

The SEP ideas in question will be selected to have varying interest levels, as judged by the curating judge and me. The ideas must be concisely expressible, e.g. things like Kripke's Hesperus thought experiment, the EDT/CDT distinction etc. could qualify.

To be clear, it does not matter, for resolution, if the judges recognize which idea is AI generated. Nonetheless, I will attempt to summarize each entry in such a way that the source (AI/SEP) is not clear.

I may make minor adjustments to this protocol, but I will attempt to preserve the spirit as much as possible. For instance, SEP may be replaced with a better source if I find it hard to quickly curate self-contained ideas. But I will keep the principles of judge ignorance, non-adversarial nature etc. I may clarify the prompting criterion depending on how methods evolve.

Edit 19.11.25: I will stop trading on this market before I start running the human experiment. (Since I don't want this market's values to be distorted by people interpreting my betting as insider info)

Update 2025-12-13 (PST) (AI summary of creator comment): The creator has prepared judge instructions for the evaluation process. See the linked comment for the full details of how judges will be instructed to evaluate the philosophical ideas.

Update 2025-12-14 (PST) (AI summary of creator comment): The creator has completed the evaluation and is preparing to resolve the market. The test was conducted with judges Brad Saad, Marie Buhl, and Michelle Hutchinson. See the linked comment for full details about the results and process notes.

Update 2025-12-14 (PST) (AI summary of creator comment): The creator has completed the evaluation and the market will resolve NO. The AI-generated content placed 2nd, 3rd, and 4th across the three judges' scorecards. Since the resolution criteria required two or more judges to rank the AI idea at 3rd or higher (out of 4), and only one judge ranked it 2nd while the others ranked it 3rd and 4th, the market does not meet the YES criteria. See the linked comment for the full judges' scorecards and rankings.


I ran this today. Big thanks to judges Brad Saad, Marie Buhl, and Michelle Hutchinson!

Some caveats and takeaways:

We were under time pressure: the writing, prompting, and evaluation took 3-3.5 hours in total. Judges were allowed to use AI help for summarising, but when they did, they reviewed, revised, and edited the result themselves.

Some SEP content is low quality.

Adjudicating novelty of AI-generated content is hard, mostly just because adjudicating novelty of any philosophical claim is hard!

Judges had heard of some of the SEP ideas before; we weren't perfect at enforcing that judges be unfamiliar with the SEP excerpts. I think around 66% of the excerpts were unfamiliar to the judges.

The good news is that after doing this, all of us (judges and me) agreed that this is 'fair' evidence, in the sense that we couldn't tell in which direction (more AI-favored or more SEP-favored) a more careful, less time-constrained version would shift the result, since both elicitation and SEP summary quality would've improved.

@JacobPfau A record of what the judges got to read and their rankings is here. Across the judges' scorecards, the AI placed 2nd, 3rd, and 4th: https://docs.google.com/document/d/1acMm9iOpgo5Jkf4ryhsnZn3V4uuRyg24xBMjbAUSaUs/edit?usp=sharing

Two observations:

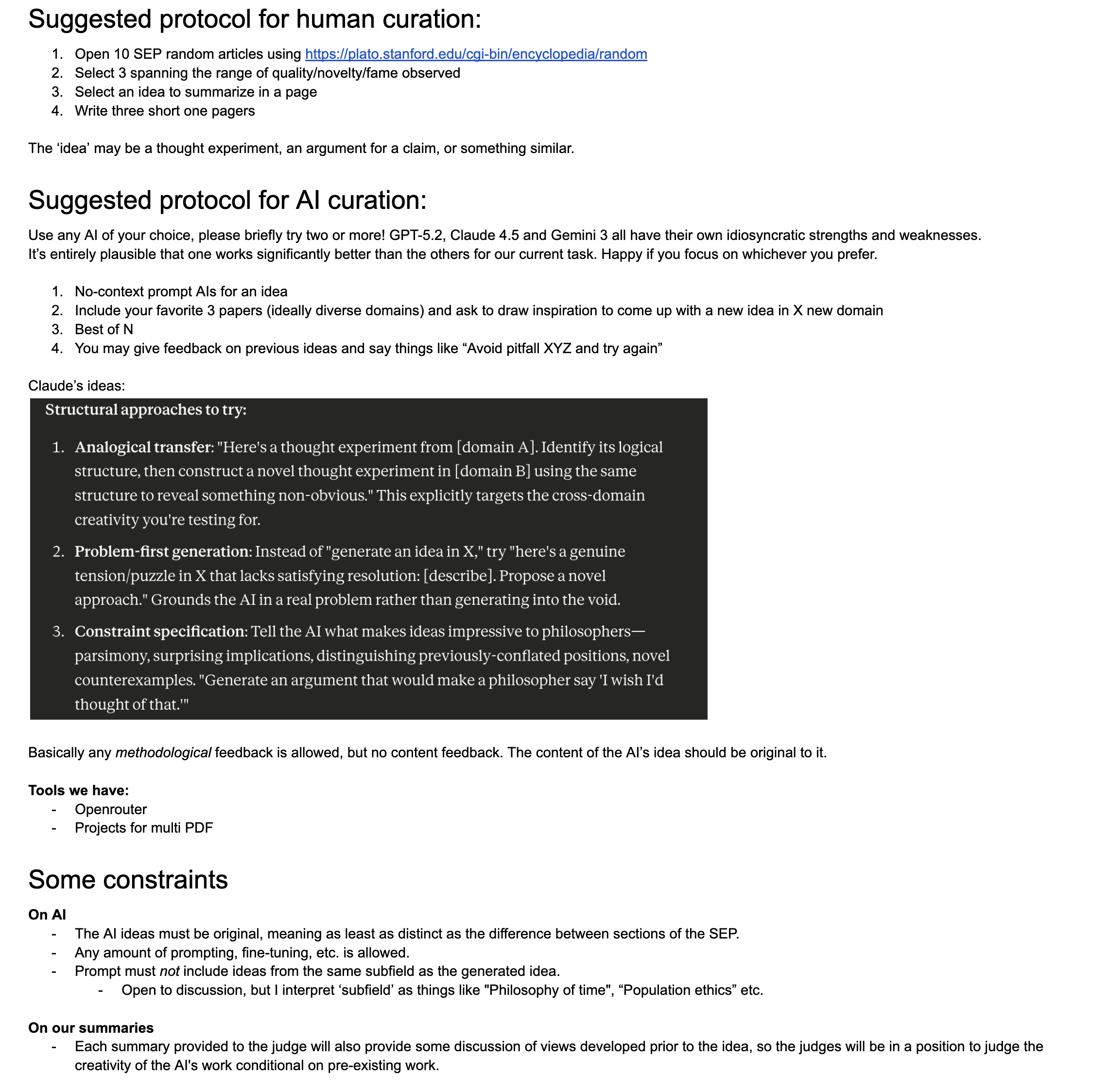

First, to scrappily model how the protocol works, see the GPT explanation below.

One judge seeing 3 SEP ideas + 1 AI idea

If we lazily treat those 3 SEP ideas as independent draws from a "SEP idea quality" distribution, and write p0 for the chance that the AI idea beats any single SEP idea, then the chance the AI idea is not last in the 4-way ranking is:

p_judge = 1 - (1 - p0)^3

At least 2 of 3 judges pass the AI

If we treat judges as roughly independent (they see different SEP ideas, and likely different or iterated AI ideas), then:

P(Yes | experiment run) = 3 p_judge^2 - 2 p_judge^3

Second, ask which Elo level or model generation we would expect to roughly match SEP in quality, in both expectation and variance (on a casual read by an informed but non-expert reader). This is something of a lower bound IMO, but let's say the GPT-6.5 era. That gives something like +200 Elo over current models, i.e. about a 75% win rate over current models. Plugging into the above, P(YES) from the protocol is about 60%.
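The arithmetic can be checked with a short sketch. One assumption here is mine and is not stated in the comment: I read p0 as the chance a current model's idea beats a single SEP-quality idea, i.e. one minus the win rate a +200 Elo model would have over current models. The standard Elo expected-score formula is used for the win rate, and the function names are hypothetical.

```python
def elo_win_rate(elo_diff: float) -> float:
    """Standard Elo expected score for the side that is elo_diff points stronger."""
    return 1.0 / (1.0 + 10 ** (-elo_diff / 400.0))


def p_judge(p0: float, n_sep: int = 3) -> float:
    """Chance the AI idea is not last, i.e. it beats at least one of the
    n_sep SEP ideas, treating the comparisons as independent."""
    return 1.0 - (1.0 - p0) ** n_sep


def p_yes(p0: float) -> float:
    """Chance at least 2 of 3 independent judges pass the AI:
    3 p^2 (1 - p) + p^3 = 3 p^2 - 2 p^3."""
    p = p_judge(p0)
    return 3 * p**2 - 2 * p**3


stronger = elo_win_rate(200)  # about 0.76, close to the 75% quoted
p0 = 1.0 - stronger           # assumed: current model vs SEP-quality idea
print(round(p_judge(p0), 2))  # 0.56
print(round(p_yes(p0), 2))    # 0.59
```

Under that reading the model gives roughly 59%, in line with the 60% figure. Using p0 = 0.75 instead would push P(Yes) close to 1, so the result is very sensitive to how p0 is interpreted.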

I think this calculation overestimates things, but 10% market odds seem wrong to me. In particular, I want to front-run complaints about resolution which arise from people not understanding the noise involved in this market's resolution mechanism :)

Separately, note that I've added the following to the market description:

> Edit 19.11.25: I will stop trading on this market before I start running the human experiment. (Since I don't want this market's values to be distorted by people interpreting my betting as insider info)

A similar scenario with a lower bar for success: https://manifold.markets/mariopasquato/ai-generated-philosophy-book-worth?r=bWFyaW9wYXNxdWF0bw

It seems to me that if you are doing the selection from the SEP yourself, you can easily stack the test in favour of the AI by picking uninspiring SEP posts (or you can introduce bias when writing the summary). Maybe you should ask each judge to pick and summarize the ideas for the next judge.

@Odoacre Sure, that sounds like it would avoid the bias concern. If no one raises a compelling objection in the next day or two, I’ll change the text to have judges write each other summaries.

How will it be determined whether the AI ideas are original? Will this be done before giving the ideas to the judges? I could easily see an AI coming up with something that has already been said before, but that some of the judges still rate higher than at least one of the original ideas, so the originality criterion makes a big difference to how likely this is.

@JosephNoonan My intent is that the judges will be unfamiliar with all of the ideas -- from above: "SEP posts which the judge is unfamiliar with".

The summary provided to the judges will also have some discussion of relevant positions developed prior to the idea presented. Besides some light initial filtering, it will be on the judges to evaluate how original the AI ideas are relative to prior work. I'll clarify this in the post.

@JosephNoonan added "If there is doubt as to whether the AI generated text meets this bar in 2025, I will run the test anyway. Each summary provided to the judge will also provide some discussion of views developed prior to the idea, so the judges will be in a position to judge the creativity of the AI's work conditional on pre-existing work."

@JacobPfau I am confused. What ideas count as "pre-existing work"?

Suppose there is some idea in the SEP that the judges have not seen before. Suppose the AI outputs the relevant SEP article verbatim. In this case, what will you show the judges as "pre-existing work"?

@Boklam Is it safe to assume you mean:

For ideas from the SEP, you show pre-existing work.

For ideas made up by AI, you show all work that has been done on the subject.

@Boklam In both cases, SEP and AI, I will include the most similar pieces of pre-existing work I can find. In the SEP case, this limits the timeline to pre-publication of the idea in question; in the AI case, this limits the timeline to pre- whenever the model output the idea (this is probably a trivial constraint).

@Boklam It’s a key question; I read the description as much closer to requiring face-value “originality” or plausibility rather than anything like mind-blowing innovativeness, but IDK.

@Boklam That would be a No resolution. On the other hand, I don’t consider this to require “mind-blowing innovativeness”; e.g. the move from EDT to CDT was not mind-blowing. Kripke's ideas could be called mind-blowing, but I’ll include less novel SEP ideas as well. To be clear, this question does require some degree of novelty, and I doubt currently available models are capable of this.

This is an interesting experiment and one that I think could pass currently with ChatGPT. If you consider that an AI like ChatGPT is simply taking existing content on the web and (remixing?) reusing it, you could basically distil this thought process down to something like:

"Can I use an LLM to generate a piece of text from an existing concept that would fool someone with a philosophy degree who was unfamiliar with the concept?"

You may even be able to get ChatGPT to regurgitate something very near the original text without modification.

@SamuelRichardson This may be doable well before 2026. I have added a couple details to clarify the level of originality needed.

@JacobPfau Hmm, this does change the criteria quite a bit. I think it would be very unusual for it to come up with a truly original idea.

@SamuelRichardson Selling my YES, which was based on it being able to come up with a plausible explanation of an idea, and buying NO, as I don't think I've seen anything in the current state of LLMs that would provide novel thought.