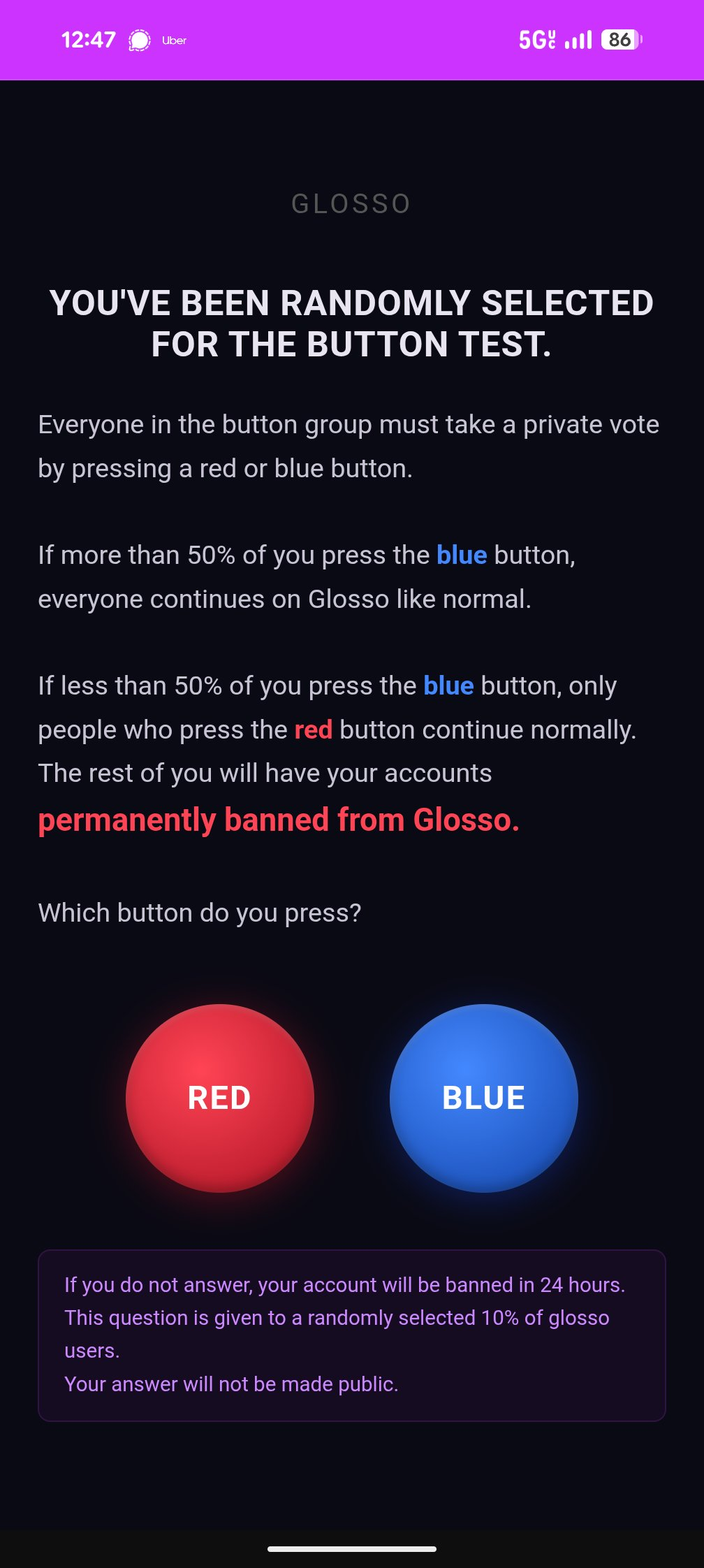

@Aella has a poll running on her poll-based social network site glosso.ink

( https://x.com/Aella_Girl/status/2048489793844355154 )

See this for the viral Tim Urban poll and a subsequent poll of the American public by David Shor:

Resolves to the answer that gets more votes when the result is revealed. Resolves N/A if the results are different than either majority "Red" or majority "Blue" (e.g. if a third option is added or if the rules are made more complex for some reason, which seems unlikely).

I will extend the timeline of this market if the vote is extended.

🏅 Top traders

| # | Trader | Total profit |
|---|---|---|
| 1 | | Ṁ5,293 |
| 2 | | Ṁ4,717 |
| 3 | | Ṁ4,427 |
| 4 | | Ṁ4,138 |
| 5 | | Ṁ3,746 |

BREAKING: Blue wins with 51.2% when carried out in real life with life or death stakes!

https://thebsdetector.substack.com/p/we-carried-out-the-redblue-button?r=19iisj

@bens I've seen folks like @MachiNi and @equinoxhq arguing about whether these results would carry over to the actual life-or-death scenario. I think you'll both be interested in these findings.

@bens Uuuh... please clarify what you mean by "actual life or death stakes"... or if we're going to learn soon enough, fine.

I believe the results would carry over, but I don't think I've argued for that conclusion, it's based on my understanding of what people are generally like, and it's really hard to convince someone they're wrong about that, so I haven't tried.

@equinoxhq in the study, if <50% of individuals selected blue, then those selecting blue would be euthanized

@bens If 1,000 people who otherwise had healthy and potentially happy lives ahead of them so that they were representative of the population in respect to their answer to "would you like to die soon y/n?" signed up to conditionally maybe die for science under circumstances similar to the red-blue meme thing... then I think MachiNi might have a point about people being insane. I do not wish to be right that the result generalizes, while gaining that level of evidence that everyone is nuts. I mean, Litany of Tarski, I guess I do, but it would be a bad outcome.

But I expect the study participants are not representative of the population re: "would you like to die soon y/n?", or there's some other catch. Still some level of evidence, though, so, fun!

Essentially

only median vote matters

your P(median) is likely 0%, but say 1% of people are plausibly in the median

meh, let me try this again

Justification for picking blue

P(Exactly 50/50 without you) × EV[the 50% of people who picked blue] ≈ on the order of 1/N, or possibly 1/√N for true tossups, but given polling says blue is far ahead it’s probably closer to 0.

in other words there’s real value on blue if

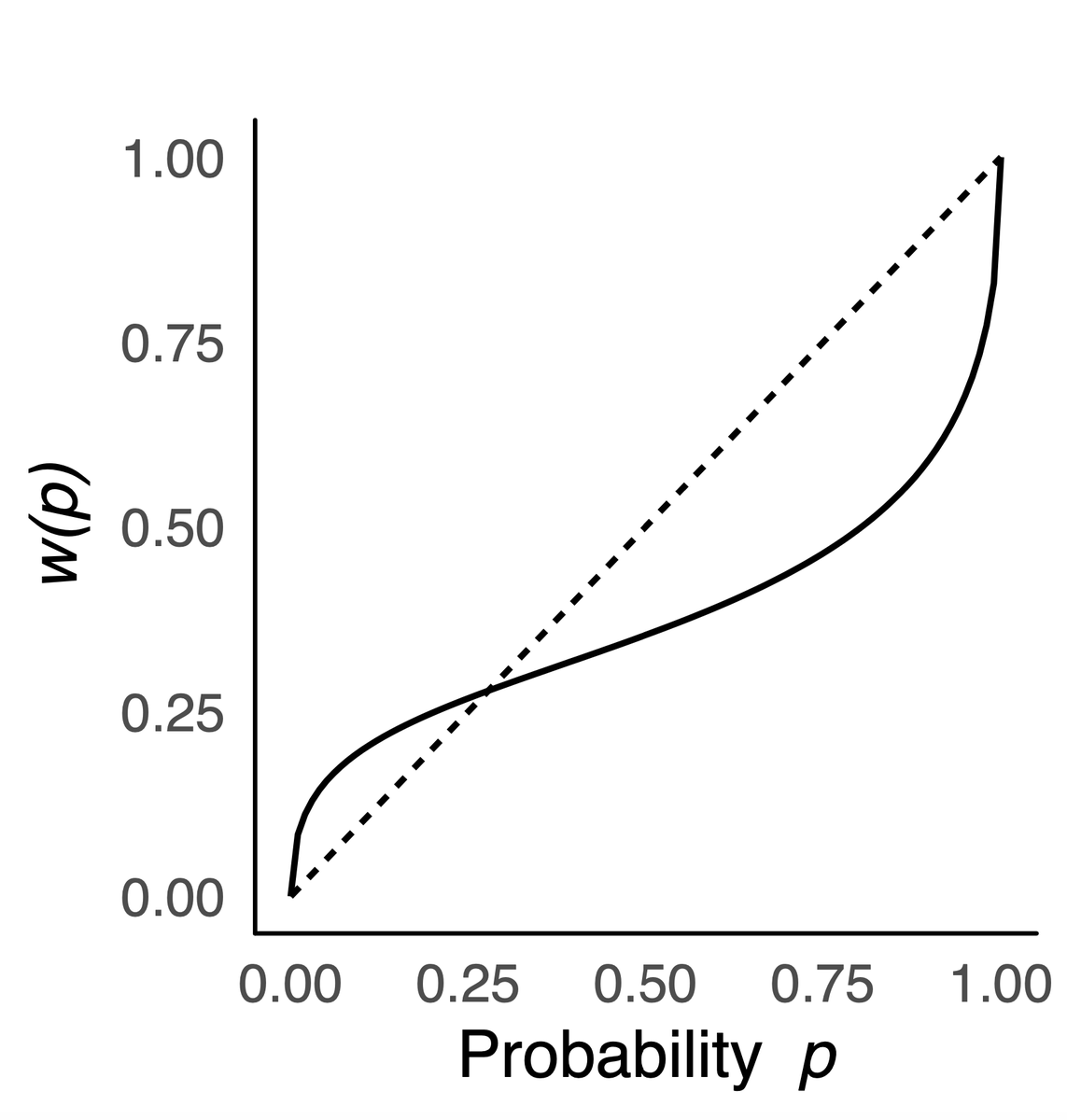

1. you have a bias that Kahneman discussed in Cumulative Prospect Theory of overweighting small probabilities (I think it’s called Possibility Effect)

2. The vote is truly uncertain and you’re selfless

vs.

Justification for picking red

P(Less than 50%) × EV[Yourself] ≈ 50% if you believe in max entropy (the most interesting is most likely) but realistically closer to 0%

ok this is basically a simple ratio

Term 1 × Term 2, where Term 1 = P(<50%) ÷ P(Exactly 50%) and Term 2 = EV[Yourself] ÷ EV[the 50% of the population selecting Blue]

So Term 1 is clearly very large, likely on the order of N or √N

Term 2 is subjective, if you’re selfish it could easily be 1 or higher making red the obvious choice…

if you’re a utilitarian it’s clearly on the order of 1/N, and Blue is the obvious choice…

the interesting zone is for people who are in the middle on both terms

and think Term 1 is somewhere in the middle (allowing for collusion / groupthink that pushes expectations closer to 50/50) and value themselves more than others, but not more than half of society combined, e.g. on the order of √N times others
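For anyone who wants to put rough numbers on Term 1, here is a minimal sketch. It assumes, purely for illustration, that each of the N other voters independently presses blue with the same probability `p_blue`, and that blue survives when at least 50% of all voters (you included) press it. The function names are made up for this example.

```python
from math import comb

def binom_pmf(n: int, k: int, p: float) -> float:
    """Probability of exactly k blue votes among n independent voters."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)

def p_pivotal(n_others: int, p_blue: float) -> float:
    """P(your blue vote is the one that gets blue to 50%)."""
    # With n_others + 1 voters in total, you are decisive only when exactly
    # n_others // 2 of the others press blue.
    return binom_pmf(n_others, n_others // 2, p_blue)

def p_blue_loses(n_others: int, p_blue: float) -> float:
    """P(blue stays under 50% even after you press blue)."""
    total = n_others + 1
    return sum(
        binom_pmf(n_others, k, p_blue)
        for k in range(n_others + 1)
        if 2 * (k + 1) < total  # blue share strictly below one half
    )

def term1(n_others: int, p_blue: float) -> float:
    """Ratio P(<50%) / P(exactly at the threshold), i.e. Term 1."""
    return p_blue_loses(n_others, p_blue) / p_pivotal(n_others, p_blue)

# Compare a dead heat with a modest blue lead (small N only; this
# direct formula is not meant for populations in the millions).
for n in (100, 400, 1000):
    print(n, round(term1(n, 0.5), 2), round(term1(n, 0.55), 2))
```

In the dead-heat case this ratio grows roughly like √N, which matches the "order of √N" end of the estimate. How it behaves when one side is clearly ahead depends entirely on the assumed `p_blue`, so treat the second column as a what-if rather than a prediction.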

@ChinmayTheMathGuy for voting, and similar endeavors where you need many ppl to work together, there's a strong cooperation instinct that makes us each value our contribution very highly. Realistically one's chance of being the deciding vote is very low, but we know that reasoning like that would kill cooperation, so we just don't. Some try to rationalize this using Kant's categorical imperative or Yudkowsky's FDT. I don't think either of them is an exact match. But rational or not, it works, and it's an important part of human survival. This is what's motivating the Blue vote here. And once you know that Blue will probably win, joining the effort becomes rational as well.

Does anyone happen to know the motivation behind Glosso being intentionally grown slowly (hence the limited invite codes)? It seems like a site that is more effective the more users it has, so this risks it falling out of the online discourse spotlight before reaching the critical mass of regular users to be self-sustaining, right?

@TheAllMemeingEye not sure but I'd guess it's about solidifying:

tech infrastructure

community culture

workflow pipeline

and also providing early access rewards to subscribers as a loyalty bonus

@TheAllMemeingEye ofc the other way to think about critical mass is anticipation. it's a fine line that's easy to miss the mark on, but building FOMO and demand is highly useful for hitting that target

@TheAllMemeingEye scaling too fast could break infrastructure, also i wanna be quite careful about security/privacy stuff and i dont wanna raise the stakes on that too fast. also testing out the way people interact with features helps me slowly build a good site with invested users as opposed to spraying out a low quality site to a lot of less-invested users that may bounce off and thus get immunized to investing in it later

I haven't thought particularly hard about this, but perhaps a blue-presser can help me see their perspective. Presumably, the position is that pressing red is somehow bad, because you are contributing some amount of expected harm (i.e. the probability of your vote flipping the outcome x the badness of the flipped outcome). It seems to me, though, that even if that bad outcome obtains, you are not really morally culpable for it, given that everyone harmed willingly put themselves in that situation (or at least could reasonably be expected to understand as much).

Suppose I am driving a car. Someone chooses to jump in front of the car, knowing they could be injured. Another person jumps in to save them, also knowing they could be harmed. I have the choice to swerve or keep driving straight. If I swerve, I very well may crash and die. If I keep driving straight, the people who have jumped in front of my car may die (or they may not; it's impossible to tell for sure). It's not at all clear to me why I should be expected to swerve, given that they both chose to put themselves in danger.

@NBAP I don’t want bad things to happen to people. Pressing red increases the odds that something bad happens to someone.

Therefore, press blue. Trust that people can cooperate.

@JohnSmithb9be

Notably, pressing blue also increases the odds that something bad happens to someone (i.e. you).

I don't want bad things to happen to people either, but the personal risk that I am willing to entertain is influenced by (among other things):

1. The probability that taking on the risk will prevent people from having bad things happen to them.

2. The level of responsibility I have to keep the bad things from happening to those people.

Assuming that I am not exceptionally confident that blue will win (which perhaps I can be, having now seen popular sentiment, but I do not think that should be assumed in the thought experiment), it seems absurd to me that I should risk a potentially sizeable chance of dying in exchange for a fleetingly small chance (i.e. what are the odds that the margin is exactly 1 vote?) to save a bunch of people who willingly put themselves in that position to begin with.

@marvingardens I had two recent debates that I put a lot of thought into, on completely different topics:

1) The defensibility of ethical egoism over veganism (playing devil's advocate)

2) The scientific literature on the effects of egg consumption on blood lipids

Why do you ask?

@NBAP the only viable objection to Red I’ve heard so far is that children and mentally disabled people will press Blue through no fault of their own so to speak.

@NBAP on the point that the chance of the margin being exactly 1 is very small, do you in fact refrain from voting in elections for this reason?

Also note that even that small probability scales like 1/N, while the number of people you'd potentially save scales like N, so it cancels.
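The scaling claim can be checked numerically under a simple model. The sketch below assumes each other voter independently presses blue with probability `p_blue` (an illustrative assumption; real votes are correlated), and multiplies the chance of being the deciding vote by the number of other blue voters who would be saved in that case. `expected_lives_saved` is a hypothetical name, not anything from the polls.

```python
from math import exp, lgamma, log

def log_binom_pmf(n: int, k: int, p: float) -> float:
    """Log-probability of exactly k successes in n trials (stable for large n)."""
    log_comb = lgamma(n + 1) - lgamma(k + 1) - lgamma(n - k + 1)
    return log_comb + k * log(p) + (n - k) * log(1 - p)

def expected_lives_saved(n_others: int, p_blue: float) -> float:
    """P(you are the deciding blue vote) times the other blue voters saved."""
    k = n_others // 2  # other blue voters in the one case where you decide
    return exp(log_binom_pmf(n_others, k, p_blue)) * k

# Dead heat versus a clear blue lead, for growing populations.
for n in (100, 10_000, 1_000_000):
    print(n, expected_lives_saved(n, 0.5), expected_lives_saved(n, 0.6))
```

Under these assumptions the product does not settle at a constant. In a dead heat the pivot probability falls like 1/√N, so the product keeps growing with N; with a clear lead for either side the pivot probability collapses and the product goes to essentially zero. Which of those regimes applies is the part people actually disagree about.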

@AhronMaline No, I often vote in elections. When I do, though, I am usually reasonably confident that I will not die as a direct result of doing so.

@NBAP true. but you will spend some amount of time waiting in line etc. Which is most likely a larger cost than the EV you get from your probability of being the single deciding vote.

I'm suggesting that we think of adding a vote to the pile as important and valuable, even if it's not the deciding vote. After all, if everyone thought that way...

@AhronMaline Perhaps, but I've no doubt that I wouldn't vote if it were not for my reasonably high confidence that I will not die as a direct result of doing so, and so I don't think the analogy is particularly informative with regard to the button thought experiment.

To be clear, I would be willing to press the blue button if it just involved walking to a polling station. But I'm absolutely not pushing the button without a reasonable degree of certainty that I won't die as a result.

> the only viable objection to Red I’ve heard so far is that children and mentally disabled people will press Blue through no fault of their own so to speak.

I think I was the one that made that objection, so for clarity, although it's true that children and the mentally disabled may make poorly thought through choices, I don't think the people who are going to press blue are limited to them. Basically, given what I know of people, it's hard to get 80% of people (typically adults who can read a survey, which excludes children and the severely mentally disabled) to agree on anything. At various times I've heard things like "30% of US citizens are young earth creationists", so getting 80% to agree with basic, obvious, scientific facts is not guaranteed. As a nearly-absolute lower bound, there's the lizardman constant of around 4%. So, if "everyone" presses red, 1 in 25 people die. The general conclusion is: no matter whether your argument is correct or not, if your strategy relies on everyone agreeing with you and voting the same way in order for them to live, a lot of people are going to die. Because that is the case, you either accept those losses, or pick a different strategy.

@equinoxhq bracketing children and the mentally disabled (the only viable objection I’ve seen online, not just from you), one must be insane to press blue. You have no obligation to save people from playing Russian roulette.

@MachiNi So, you accept the losses, even if they're something like 20% or higher of the population, plausibly up to 50%-1, because they "must be insane". I mean, yes, that's a choice one can make....

My choice is "I don't care why people made a choice I disagree with, that shouldn't be a death sentence, and I'm not going to bucket everyone who didn't reach the same conclusion as me as 'insane'". So I vote blue, in favour of everyone living, regardless of which button they pressed.

@MachiNi Also, for clarity, so far blue tends to win in these polls. Is your position that the majority of the population is insane by your definition of that word?

@MachiNi Children, the mentally disabled, the clumsy, the mentally didn't-think-this-through, the mentally thought-this-through-differently-than-you, the mentally actually-interested-in-the-children-angle-rather-than-ignoring-it... a world of madmen who aren't you!

@equinoxhq we’ve already established that you are virtuous. What shouldn’t be a death sentence for others shouldn’t be one for me. No one is morally let alone rationally required to risk their lives to save people from their poor decisions.

@MachiNi I do not claim that pressing blue is required, on any basis, of me, or you, or anyone. I claim that's what I (and a lot of people) would do. And through this dialogue, I now have a better understanding of why you would not.

@MachiNi I expect it sucks to live in a world where it seems to you that the majority of the people around you are insane. I have sometimes felt a little bit of that when I realize how many people are disconnected from reality on a particular issue. You have my sympathy. (My first way of phrasing this was "I'm sorry for you", but I want what I say to come across as genuine rather than snarky).

@equinoxhq thankfully talk is cheap and I’m confident people would not act as they say they would in those polls. Thanks for the sympathy.

PS: your view of morality reminded me of Alastair Norcross’s. It’s interesting (and plausible to me). Check it out: https://www.amazon.com/Morality-Degrees-Reasons-without-Demands/dp/0198844999?dplnkId=daa3deab-1de9-4ebc-8ced-ed6e7c3b186b&nodl=1

@MachiNi There are similarities (based on the paragraph on that page). I don't buy into the "no fundamental classification of actions as right or wrong" part, but I do think rightness and wrongness is scalar rather than binary, and I buy into consequentialism. I'm aware of decision theories other than consequentialism, and various problems with consequentialism, but I don't quite know what to make of the decision theory alternatives, don't claim to have a perfect understanding of the good that would remain the same if I got much smarter, and consequentialism serves me well enough for the time being. If "no fundamental classification of actions as right or wrong." means "moral antirealism" then... I don't have a strong position on moral realism/antirealism, but within the context of human society, morality seems real enough to be a useful concept, and I'm pretty sure where it comes from (backchaining from consequences and what led groups to cohere and work together successfully in the past, leading to the development of certain instincts and conserved mental structures around things like fairness and Haidt's other moral foundations).

@MachiNi I perceive a conflict between "people are insane" and "people are virtue signaling while talk is cheap, but will do the sane thing regardless of their words if the situation calls for it".

Is there a way you resolve this conflict?

@equinoxhq people pressing blue in your scenario are insane. But most people are not insane. They say they’d do something insane but they won’t.

@MachiNi I do feel like "No one is morally let alone rationally required to risk their lives to save people from their poor decisions." answers "what about the rest?" adequately. 😂

Particularly combined with the fact you think pushing blue is ~certain death and anyone who thinks otherwise is insane. The crux of our disagreement is around how likely we think it is that one can safely rely upon altruistic behaviour from others.

There is a time in my life when I'd have pushed red, and thought it suicidal not to do so. It was not a happy time.

@MachiNi Do you remember what you were thinking when you wrote that most people I listed wouldn't press blue? What was the point of positing that? To try and absolve us of responsibility to them. But "most" doesn't accomplish that.

Some people will press blue, and it isn't because they're suicidal or insane or outside of the moral community. And some people will think about that group, and thus also press blue. And some people will think about that group, and thus also press blue. Etc.

Given that, what's the bigger reach: 51%, or 100%? You're not getting 100%, that's out of the question. Meanwhile, all the evidence in front of you points to 51% being achievable, and so you have to insist it couldn't be.

@marvingardens My point since the beginning has been that no one is required to risk their life to save others, and that the ‘evidence’ you’re talking about is extremely weak. In fact, I think it’s a misleading signal which gives people the false impression that their vote (pressing blue) has a non-negligible chance of making a difference. But it doesn’t. It is tragic if people press blue, but it doesn’t follow that I should risk my life to save them based on extremely uncertain evidence.

@MachiNi For the record, and I should have stated this more clearly much earlier, rather than leaving it to be inferred: while it is true that red is the button you'd press if you didn't care about people, that is not the only reason to press red. Lots of people will have many sound, valid reasons for believing that the people around them are nowhere close to nice enough to pull off a blue victory.

I might not mind much if some uncaring and selfish psychopaths die, and I think they'd understand and reciprocate that. But the many people who would push red simply because they believed it was their best option in a bad situation, should get our support. One way to tell the two groups apart is, red-pushers who care what happens to others will try to get everyone to push red, while the uncaring won't care. Unfortunately that puts the caring reds in conflict with those who are trying for a blue majority, but, that mirrors life, where two good groups of people can be in conflict because of differing beliefs about what will happen as a result of the actions they are advocating for.

@equinoxhq I’m not really interested in people’s internal reasons and how ‘caring’ this forced choice is meant to reveal them to be. But I’ll note that people will press blue for different reasons, not just because they’re caring or mentally incompetent but for funsies, out of a death wish, for symbolic reasons, due to peer pressure or mood affiliation or tribalism, or whatnot. This is not a test of altruism or cooperation and I don’t understand why people keep insisting that it is.

> This is not a test of altruism or cooperation

Hm. Disagree. Keeping in mind that every actual test (that I can think of, there might hypothetically be exceptions) has both a false-positive and false-negative rate. Some tests are really not good at differentiating true positives and true negatives, but still provide some signal, while others are "99% accurate". We could debate about how good this test is, but to say it isn't a test seems false. For one, psychopaths will press red at a much greater rate than they will press blue, and are the archetype of "not altruistic", so that provides at least some signal. Also, the people who think fairly shallowly "red is the 'in it for yourself' button, blue is the 'try and make everyone live, at some risk to yourself' button", and press blue without thinking any further, are going to be more altruistic than the people who think "but wait, is there a catch? Maaaybe I can justify pressing red while not bucketing myself in with the selfish" and then go looking and discover that yep, there's a case for red they didn't realize. Of course, that screens off smart people who see the structure of the problem immediately, but a lot of people are not that smart.

Also, I don't normally like nitpicking, but in this case it's important what exactly we mean by "altruism" or "cooperation". If we mean by "altruism" "the internal state of wanting to help others even sometimes at a cost to oneself", that's different from "the behaviour of helping others at a potential cost to oneself". If we mean by "cooperation" "playing cooperate in a prisoner's dilemma, but this problem doesn't have the same structure so that word doesn't apply to any actions in this context", that's different from "basically a synonym for altruistic behaviour".

This is definitely a test for altruistic behaviour (regardless of motive/internal state), but might misfire if you treat it as a test for internal state/thought process, for people who are altruistic by nature but won't be a sucker in their external behaviour, and also believe they'd get suckered in this case.

I expect what you were trying to express might be something like "In many real world situations, I would behave altruistically if it didn't carry too much risk of too great a harm, and this test ignored that and bucketed me in with the psychopaths, so it's a bad test." Is that about right? In which case, yes it did, and I can understand why you'd object.

I will also note, I like this test in some ways. It is my belief that there are a lot of people who are weakly altruistic by nature, but will not let that cause them to get screwed if that's obviously what's going to happen (and, because the world often sucks, there are a lot of people who think that's obviously what's going to happen) - and this test gives some signal about what people think is going to happen if they're nice when it counts. It's a muddled signal, but it's something.

Here's my actual belief about human nature, in case you're curious: We have prosocial instincts, but we also learn from experience and follow incentives, and experience and incentives mostly dominate.

So: Put someone in a situation where altruism is punished, and they'll learn from that and behave accordingly, and look to someone who has lived in an environment where altruism is reliably rewarded like a selfish defector. Put someone who has antisocial instincts or no such pro- or anti-social instincts in an environment where altruism is rewarded, and they'll mostly behave altruistically. So it's hard to tell what's nature and what's someone's environment. But I fundamentally think a lot of people would like to be nicer than they think they can afford to be, while some smaller number of people are restrained by incentives... but the signal is weak, and in any particular case it's going to look a lot like people are just following their incentives. And often, people who think they can't afford to be altruistic are right about what they can afford, and this influences how they behave in a way that makes it so the people around them also have fewer affordances, leading to a self-reinforcing system. Altruism while punishing defectors is also self-reinforcing. So you get bubbles of social groups with different equilibria, throughout society.

The biggest point against my "people have prosocial instincts" idea is how children behave towards each other. You can cherrypick and say babies instinctually help people in lab environments, but kids are also friggin awful to each other when they don't need to be, in a way that helps one understand where the book Lord of the Flies came from. A lot of times people will do what they can get away with, and we really do need to carefully set up systems where altruism is rewarded, or the results will not be good.

@MachiNi as a counterpoint to Lord of the Flies, there's this piece of nonfiction: https://www.amazon.ca/Paradise-Built-Hell-Extraordinary-Communities/dp/0143118072

I wouldn't call it a strong piece of rigorous research, but I've read nonfiction that did a worse job of pointing at reality, too, I'd say it's OK.

> My point since the beginning has been that no one is required to risk their life to save others,

.

> This is not a test of altruism or cooperation and I don’t understand why people keep insisting that it is.

@marvingardens that’s right. If you see a tension here the problem is yours. Anyone insisting that somehow people are required or have overwhelming reason to sacrifice themselves are claiming this is a test of altruism. I’m saying it’s not.

@MachiNi Prompting you to take an entrenched position on whether or not people are required to sacrifice themselves for others makes this a test of altruism or cooperation. Your response makes it that. You taking that position makes it that.

I’m allergic to Solnit’s prose but this sure sounds like a valiant effort.

Understood. If interested, I typed this prompt into Claude, and then had a brief follow-up conversation about what evidence there is re: human nature and altruism.

I have a colleague who I would like to understand the contents of Rebecca Solnit's book A Paradise Built in Hell (which I have read), but this person does not enjoy Solnit's prose, which I understand because a lot of her other work is much more activist/anticapitalist.

Could you review the key factual claims in the book, and the evidence for each, in a way that would read well to an educated and logical person who didn't share Solnit's viewpoint?

https://claude.ai/share/04d5dfe5-7151-4e91-8358-03cb4da8e829

Edit to add: It doesn't support my viewpoint as strongly as I'd thought (I last read the book pre-Covid), but I'm sharing it anyway because that seems like the right thing to do in this situation.

@MachiNi I'm "jumping in" (replying to notifications in my inbox because I was in this thread before you) to point out the thing I said: You said blue is crazy if you ignore the existence of certain classes of people, but there are simply more cases than just those classes -- and one of the cases is simply people who are not ignoring those classes, a recursively-growing class which explains the cooperation you have already generally observed in operationalizations of this problem.

Your response to this was to say that not everyone in my additional classes would press blue. This simply does not affect the point. The people who will press blue are a real number, and observable, which causes the recursively growing effect.

In response you keep repeating that you don't have a responsibility to anyone. As far as I can see in this thread, no one tried to tell you that you did.

If you keep putting things in my inbox there's a high risk that I keep putting things in yours. That's just how it is with this guy.

@marvingardens you didn’t start this thread and I never replied to you, but in any case this conversation is clearly hitting diminishing returns. I just don’t agree with your point about recursivity, because these polls are garbage, but so what? What exactly do you want me to tell you? That I would let you risk your life so you can make a point? Yes I would. Knock yourself out and think ill of me. When the test comes I’ll pick up the pieces and try to prevent the next psycho from giving us that absurd choice again.

> I just don’t agree with your point about recursivity, because these polls are garbage, but so what?

The recursive character has nothing to do with the polls or the quality of any example. Here's the recursive character:

Some set of people will choose blue. Thinking of those people, some more people will choose blue. Thinking of those people, some more people will choose blue. Thinking of those people, some more people will choose blue. Etc.

It is a cooperative contagion.
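The contagion described here can be sketched as a threshold cascade. This is a toy model, not something anyone in the thread proposed: each person gets a hypothetical threshold, meaning the share of blue pressers they need to expect before pressing blue themselves, and the share is recomputed until it stops changing. The thresholds below are invented for illustration.

```python
def cascade(thresholds: list[float]) -> float:
    """Return the blue share once no further person is persuaded."""
    n = len(thresholds)
    share = 0.0
    while True:
        # Everyone whose threshold is already met presses blue.
        new_share = sum(t <= share for t in thresholds) / n
        if new_share == share:
            return share
        share = new_share

# 100 people with thresholds 0.00, 0.01, ..., 0.99: each new presser
# tips exactly one more person.
even = [i / 100 for i in range(100)]

# Same crowd, except the person with threshold 0.01 now needs 0.02.
gap = [0.0, 0.02] + [i / 100 for i in range(2, 100)]

print(cascade(even))
print(cascade(gap))
```

With evenly spread thresholds the cascade runs all the way to everyone. Moving one person's threshold from 0.01 to 0.02 stalls it after the first presser. So in this toy model the recursion is real, but whether it reaches 51% depends on how the thresholds are distributed, which is roughly where the two sides of this thread part ways.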