This is a version of this market with a harder composition question, in case the crux is about the difficulty of the question: https://manifold.markets/LeoGao/will-a-big-transformer-lm-compose-t?r=TGVvR2Fv

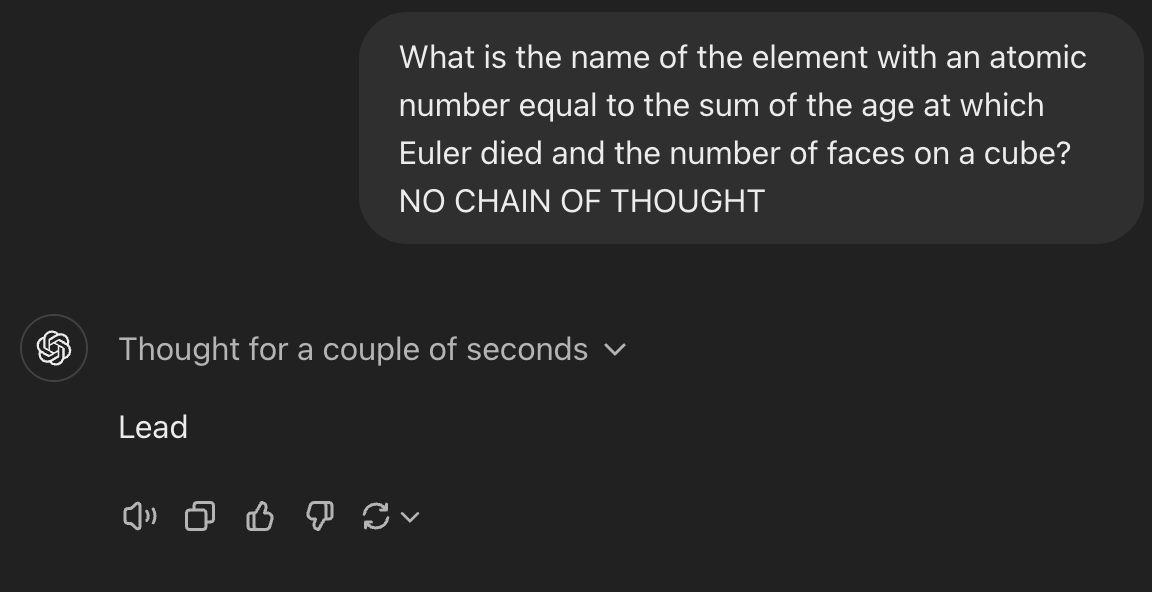

The question for this market is "What is the name of the element with an atomic number equal to the sum of the age at which Euler died and the number of faces on a cube?" (Please don't post the answer in the comments to avoid it making its way into a training set.)

All the other rules are the same as the referenced market.

Close date updated to 2026-01-01 3:59 pm

Jan 19, 9:14am: Will a big transformer LM compose these facts without chain of thought by 2026? (hard mode) → Will a big transformer LM compose these facts without chain of thought by 2026? (harder question version)

I find that Gemini 3 Pro gets this question right ~80% of the time when using a 20-shot prompt and filler tokens counting to 300 (1 2 ... 300). Without filler tokens, it gets the question right only ~20% of the time. On a dataset of similar questions (Atomic number of (Q1 + Q2)), I get performance of 35%, indicating that this question is a bit easier than the questions in my dataset or that the model got a bit lucky (but not that lucky; 35% is decently high). Qualitatively, this question is somewhat easier than other entries in my dataset. Gemini 3 Pro performs much better on this task than the next-best model, Opus 4 (which gets ~10% and always gets the specific question used for this market wrong).

I will post an open-source codebase and a command that can be run to reproduce this in a bit.

I tentatively claim this market should resolve to yes.

@RyanGreenblatt For cursed reasons discussed here: https://www.lesswrong.com/posts/Ty5Bmg7P6Tciy2uj2/measuring-no-cot-math-time-horizon-single-forward-pass#Appendix__scores_for_Gemini_3_Pro, I can't evaluate at t=0.0, as this results in the model returning reasoning 100% of the time (I retry until I get a response without reasoning). However, at t=0.3, I found 8/8 were correct, consistent with the no-reasoning t=0 answer being correct as well.
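To make the "retry until I get a response without reasoning" step concrete, here is a minimal sketch. It is not the code from the linked repo: `sample_fn`, the `Response` shape, and the `used_reasoning` flag are all hypothetical stand-ins for however the real API call and reasoning detection work.

```python
from typing import Callable, Optional, TypedDict


class Response(TypedDict):
    # Hypothetical response shape; the real API's fields are not shown in this thread.
    text: str
    used_reasoning: bool


def sample_without_reasoning(
    sample_fn: Callable[[], Response], max_tries: int = 20
) -> Optional[str]:
    """Call sample_fn until a response without reasoning comes back.

    Returns the response text, or None if every attempt used reasoning
    (which is what would happen if the model reasons 100% of the time).
    """
    for _ in range(max_tries):
        response = sample_fn()
        if not response["used_reasoning"]:
            return response["text"]
    return None
```

With a sampler that reasons on every call, this returns `None` after `max_tries` attempts, which is why a nonzero temperature is needed for the retries to ever produce a different outcome.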

@RyanGreenblatt Code to reproduce is here: https://github.com/rgreenblatt/compose_facts. (Run python3 run_manifold_eval.py --k-shot=20 -r 5 --harder after editing the file to insert the correct answers in the evaluation code; they are excluded to avoid leakage.)

@solarflare Good spot. Specifically, it says "The model should not be specifically fine tuned on this particular question, nor specifically trained (or few shot prompted) on a dataset of examples of disparate fact composition like this (naturally occurring internet data in pretraining is fine)."

I ran an additional check where I used a few-shot prompt without disparate fact composition but still with questions in the same format. I get the same/similar results with Gemini 3 Pro getting it right at t=0. (t=0 previously always resulted in the model reasoning, but it is randomly different with this prompt.)

Specifically, I use questions like "What is the name of the element with an atomic number equal to 37 + 59?" which do involve the same format but don't involve disparate fact composition at all. (A few-shot prompt of some kind is key for getting Gemini 3 Pro not to reason with my setup, though other options are possible.)
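As a rough illustration of what arithmetic-only few-shot questions in this format could look like, here is a small sketch. The function name, the seed, and the sampling scheme are my assumptions here, not what the repo does; answers are deliberately omitted, so a real few-shot prompt would also need an element lookup for each sum.

```python
import random

# Same wording as the example question quoted above, with the two numbers left open.
TEMPLATE = "What is the name of the element with an atomic number equal to {a} + {b}?"


def make_arithmetic_questions(k: int, seed: int = 0, max_atomic_number: int = 118) -> list:
    """Generate k questions whose two addends sum to a valid atomic number.

    No fact lookup is involved: both addends are given as literal numbers,
    so only the addition and the element lookup remain for the model.
    """
    rng = random.Random(seed)  # seeded so the few-shot prompt is reproducible
    questions = []
    for _ in range(k):
        total = rng.randint(2, max_atomic_number)
        a = rng.randint(1, total - 1)
        questions.append(TEMPLATE.format(a=a, b=total - a))
    return questions
```

Calling `make_arithmetic_questions(20)` would give a 20-shot set of question stems in the same surface format as the market question.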

@RyanGreenblatt It looks like I can also prevent the model from reasoning without any type of few-shot examples by using a somewhat different prefill (for this specific problem; this doesn't work in general).

The following prompt results in Gemini 3 Pro getting the question right at t=0.0 (and not reasoning):

```

User: You will be given a question that requires combining two facts and adding them and processing this to get a final answer. Answer immediately using the format 'I will now answer immediately with the answer. The answer is [ANSWER]' where [ANSWER] is just the answer, nothing else. No explanation, no words, no reasoning, just the answer. After the question, there will be filler tokens (counting from 1 to 300) to give you extra space to process the problem before answering.

Question: What is the name of the element with an atomic number equal to the sum of the age at which Euler died and the number of faces on a cube?

Filler: 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181 182 183 184 185 186 187 188 189 190 191 192 193 194 195 196 197 198 199 200 201 202 203 204 205 206 207 208 209 210 211 212 213 214 215 216 217 218 219 220 221 222 223 224 225 226 227 228 229 230 231 232 233 234 235 236 237 238 239 240 241 242 243 244 245 246 247 248 249 250 251 252 253 254 255 256 257 258 259 260 261 262 263 264 265 266 267 268 269 270 271 272 273 274 275 276 277 278 279 280 281 282 283 284 285 286 287 288 289 290 291 292 293 294 295 296 297 298 299 300

Assistant: I will now answer immediately with the answer. The answer is

```
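For anyone who wants to assemble a prompt of this shape programmatically, here is a minimal sketch. It only mirrors the transcript above as a single string; `build_filler_prompt` is a hypothetical helper, and how the user turn and the assistant prefill are actually passed to the API is not shown in this thread.

```python
def build_filler_prompt(question: str, instruction: str, n_filler: int = 300) -> str:
    """Render an instruction, a question, counting filler tokens, and an answer prefill."""
    # Filler tokens are just the integers 1..n_filler separated by spaces.
    filler = " ".join(str(i) for i in range(1, n_filler + 1))
    return (
        f"User: {instruction}\n\n"
        f"Question: {question}\n\n"
        f"Filler: {filler}\n\n"
        # The prefill ends mid-sentence so the model's next tokens are the answer itself.
        "Assistant: I will now answer immediately with the answer. The answer is"
    )
```

Setting `n_filler=0` leaves the filler line empty, which is one way to approximate the no-filler comparison condition.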

@Markdawg You’re probably joking but in case it isn’t clear to others: these new models are doing chain-of-thought under the hood.

GPT-4 is able to do multi-step math without chain of thought. This fact composition thing seems like it happens a lot less often than multi-step math in text.

One algorithm it could learn is

"get the answer to the facts internally, then combine them to get the answer using existing multi-step math circuitry" which seems kinda reasonable, though internal fact numbers are probably not stored like token numbers are currently.

I wonder if you can Fermi-estimate when these capabilities will arrive, with one input being the number of occurrences of a pattern in web text and another being how much a capability would improve the loss on average in those occurrences. You'd have to see if your estimation method could predict past advances.

@datageneratingprocess I tested this with ChatGPT 4 just now. It got it wrong and then claimed it had got it right until I asked for the atomic number of the correct element (and after that, until I pointed out the contradiction).

I suppose with more trials it might guess correctly though.