"mehr Schein als sein" = "more appearance than substance"

I saw Google's demo of Gemini (tweets linked below) and it looked amazing but overly rehearsed. I get that demos are used to sell a product. OpenAI's DALL-E 3 demo looked better than reality, but it stayed within limits. I wonder what I will think of Google's Gemini model once I get a chance to use it.

I will resolve this market after using Gemini for at least two weeks. I will be kind to Google and will only resolve this market as "Yes" if my experience doesn't match the stuff we saw over the past couple of days at all.

🏅 Top traders

| # | Trader | Total profit |
|---|---|---|
| 1 | | Ṁ96 |
| 2 | | Ṁ37 |
| 3 | | Ṁ34 |
| 4 | | Ṁ33 |
| 5 | | Ṁ27 |

@traders I tried to use Gemini but I just can't bring myself to do it for two weeks. I think this should clearly resolve Yes at this point, given that most of what was shown was just demos that had nothing to do with reality. Any objections?

@Soli I don't object, but fwiw I have been using it as my daily driver for the past couple of months, at least up until gpt-4o, because I found it to be roughly comparable to gpt-4 and better integrated into Google's other products.

@alexlitz interesting thank you for sharing - how would you rate its vision/audio capabilities? Have you seen the video where someone from google was drawing on a piece of paper while talking to gemini? Would you say this video is close to reality?

Also out of curiosity, do you use it for coding?

@Soli honestly, I haven't really used them much at all. I will try out something like the demo this afternoon though.

@Soli and yes, some for coding; it also seems subjectively to me relatively comparable. It does seem like, for this question, being relatively comparable to what was already out there could pretty reasonably qualify as "mehr Schein als sein".

@Soli I’ve been using Gemini for work and I’ve found it to be generally better than GPT-4 (mostly because it is a lot better at following instructions about how you want the answers formatted, which is key to using an LLM in a pipeline, while Gemini’s understanding and reasoning are pretty close). And for a small fraction of the cost: running our pipeline on Gemini costs about $0.02, while running it on GPT-4 costs $1.5.

For flashy use-cases that “advance the frontier of AGI”, or solving riddles, idk, maybe it didn’t pan out so well. But for real-life applications (I do AI in healthcare), Gemini has been paradigm-changing for us.

> Gemini has been paradigm-changing for us.

Appreciate you sharing your experience. I am happy to hear that you had a positive experience with Gemini. I believe it is a state-of-the-art LLM with vision support, but Google promised a bit more with its demo, such as real-time vision/audio support. Also, I am very curious to hear more about how "Gemini has been paradigm-changing" for you. Can you be more specific with some of the details?

@Soli Seems like I’m late, sorry, I was travelling.

The main reasons are that it’s significantly cheaper (about 10-20x) than competitors, and significantly better at following commands, compared to GPT-4 or similar.

This means that we can put Gemini into a data pipeline, and rely on its output being high quality and consistently formatted. GPT-4 often tries to show how smart it is, so it won’t follow instructions as often. For example, if you say: “please only answer one of the following options”, GPT-4 will more often add a sentence, which breaks the pipeline if you rely on a consistent answer (or makes us have to account for these cases). Gemini is better “trained”.

GPT is like a smart kid with no job training, so it’s like “cool, but it would be expensive to use and a lot of work to wrangle it”. Gemini is an already-trained worker that is just as smart, and way cheaper. That makes it usable for a lot more applications, beyond just being a cool toy.
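
To make the formatting problem above concrete, here is a minimal sketch of the kind of guard a pipeline might need when a model is told to "only answer one of the following options" but adds a sentence anyway. Everything in it is an assumption for illustration: the option set `ALLOWED`, the function name `parse_choice`, and the lenient fallback rule are hypothetical, not the pipeline described in this thread and not any vendor's API.

```python
import re
from typing import Optional

# Hypothetical option set; a real pipeline would define its own.
ALLOWED = ("yes", "no", "unsure")


def parse_choice(raw: str, allowed=ALLOWED) -> Optional[str]:
    """Return the single allowed option found in `raw`, or None if unclear."""
    # Strict path: the reply is exactly one option, ignoring case,
    # surrounding whitespace, and trailing punctuation or quotes.
    cleaned = raw.strip().strip(".!\"'").lower()
    if cleaned in allowed:
        return cleaned

    # Lenient path: the model added extra text. Accept the reply only if
    # exactly one allowed option appears as a whole word.
    lowered = raw.lower()
    found = {
        opt for opt in allowed
        if re.search(rf"\b{re.escape(opt)}\b", lowered)
    }
    return found.pop() if len(found) == 1 else None


if __name__ == "__main__":
    print(parse_choice("Yes"))
    print(parse_choice("Yes. Based on the notes, this seems likely."))
    print(parse_choice("It could be yes or no."))
```

A reply that follows the instruction takes the strict path; a reply with an added sentence only survives if it is unambiguous, and anything else comes back as `None` so the caller can retry or flag it. This is the "account for these cases" work that a model with consistent formatting would make unnecessary.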

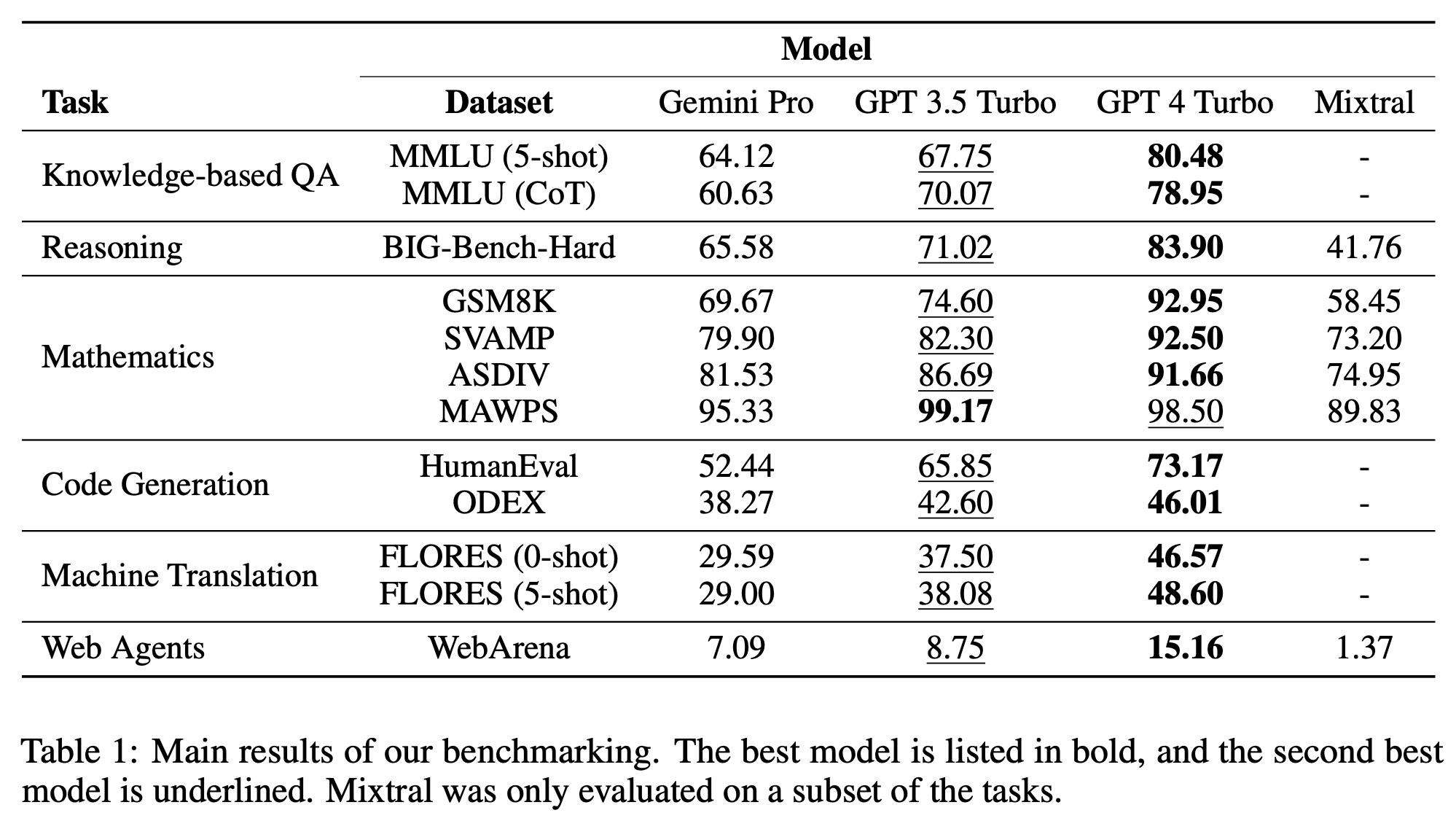

There's a market at 90% which says it will beat chatgpt 4 on benchmarks, but this is at 75% for "crap"/hype.

@StephanHeijl tried bard since I got an email saying it was now Gemini Pro. Not good. It seems to have minimal awareness of the context window so far and it doesn't read things you say to it objectively, it interprets them in favour of its prior assertions. It might do well on some types of benchmarks but then do badly as a conversational chatbot when the subject is complicated in any way. Hopefully it's because it's new.

@CalvinLoveland I will use the best version I can get my hands on. I am also willing to pay up to 20-25 EUR (same as ChatGPT).

@Soli the demos are presumably Ultra, but Ultra isn't available yet. So the best version you can get now is Pro, but that's not really the relevant comparison.

@chrisjbillington when do you think I will be able to use Ultra? If it's a couple of weeks, then I think we can push the deadline of the market, but if it is a couple of months, then I think it is fair to use the worse model, since in some ways it is misleading to demo something, say it will be available soon, and then just release something else for months.

@Soli Don't know, but markets here suggest unlikely this month, probably February or March, and unlikely to be later than that. That sounds reasonable to me.

I think this market becomes a completely different one if you test Pro instead of Ultra, as Pro is not even in the same weight class as Ultra. You might as well resolve YES right away without actually testing in that case.

So despite it being poor form to demo something and not release it for a bit, I don't think this question is particularly meaningful unless it's about Ultra.

@chrisjbillington ok i am fine with waiting for Ultra if participants of this market do not see a problem in this ✌🏼- maybe we can ping the top 5 yes holders?

@chrisjbillington I changed the title to include Ultra. Let's see if anyone complains in the next 48 hours; otherwise we consider this change done.

In the demo, it seems like its modalities include continuous video input. After Dev Day I saw someone build a similar feature via the APIs, but Gemini (Ultra?) has this from day 1? If so, I think that will impress you; even if it's not as impressive in actual use as in the demo, I think you'll come down on substance.

@VAPOR the demo was faked, in that they actually just took screenshots from the videos and provided those to Gemini Ultra along with very specific prompts