Preface:

Please read the preface for this type of market and other similar third-party validated AI markets here.

Third-Party Validated, Predictive Markets: AI Theme

Market Description

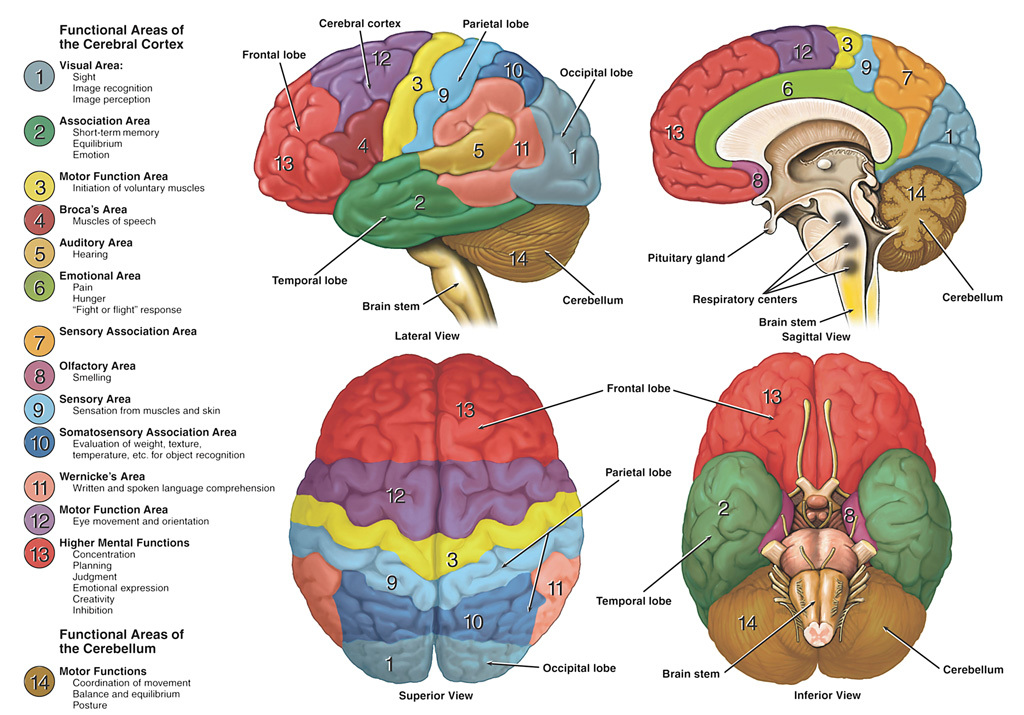

The temporal lobe (6) is an area of the brain which has been identified as being associated with Pain, Hunger, and the Fight or Flight Response. As far as I'm aware at the time of authoring this, there hasn't been a lot of recent, concentrated research into AI that can actively "feel pain" and either signal a pain response on the simple end, or react and attempt to self-repair on the more advanced end.

Obviously a basic version of this capability is likely already built into a lot of machines, e.g., your cell phone switching to low power mode when the battery runs low. But given the heightened interest in A.I., one wonders whether some researchers are going to delve into this topic more closely.

Market Resolution Threshold

This is a bit of a trickier one to start off with, as I might just be ignorant on the topic, so it is more difficult for me to draw a hard line at the outset.

However, I think it's better to find a third-party source with a discrete score that we can more or less agree upon and use that as the market resolution criterion. For the first six months to a year of this market, we should work collaboratively to find a measurement criterion which is reliable but still leaves us more or less blind to the outcome.

After that point, a numerical threshold will be chosen and that will be used to settle the resolution.

Typically I use about 1.3*X, where X is the original score at the time of market creation, as the definition of "significantly better." The factor of 1.3 might need to be higher or lower, depending upon the scoring system used and on what lands us closer to a 50/50 than a sure thing.
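To make the arithmetic concrete, here is a minimal sketch of how the 1.3*X rule could be applied once a scoring source has been agreed upon. The function names and the example scores are hypothetical, and the sketch assumes a scoring system where higher is better and where reaching the threshold exactly counts as meeting it; neither assumption has been settled for this market.

```python
def resolution_threshold(baseline_score: float, factor: float = 1.3) -> float:
    """Score needed to count as "significantly better" than the baseline.

    baseline_score is X, the score at the time of market creation.
    factor defaults to 1.3 but may be tuned up or down per scoring system.
    """
    if factor <= 0:
        raise ValueError("factor must be positive")
    return factor * baseline_score


def meets_threshold(final_score: float, baseline_score: float,
                    factor: float = 1.3) -> bool:
    """True if the final score reaches the threshold.

    Assumption: higher scores are better, and ties count as a pass.
    """
    return final_score >= resolution_threshold(baseline_score, factor)


if __name__ == "__main__":
    # Hypothetical numbers, for illustration only.
    baseline = 40.0
    print(resolution_threshold(baseline))   # threshold under the default factor
    print(meets_threshold(55.0, baseline))  # a final score well above it
    print(meets_threshold(45.0, baseline))  # a final score below it
```

If the chosen scoring system is lower-is-better, the comparison would need to be flipped and the factor inverted accordingly.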

Should no numerical threshold be found, this bet will resolve N/A, potentially sooner than the market resolution date so as not to tie up people's capital further.

Able to feel and react to pain

Vs

Able to understand and signal its expected response to pain

Pain in biological organisms evolved as a signalling mechanism. We evolved through layers of selection, one of which is competition, often of the "survival of the fittest" type, where pain is helpful and provides meaningful information.

We hand-select and craft current AI systems, and their evolution/selection through competition follows criteria such as benchmark performance, revenue growth, etc. (deep learning ensembles, then scaled transformers with funky optimisations, win currently, but is there any DIRECT feedback? The current selection seems to be by proxy). As I understand it, none of these criteria has "pain" as part of the feedback loop.

@firstuserhere oh i just wrote out as i thought. If it doesn't make sense, feel free to ask me to reformat and structure.