I know, it seems extremely unlikely. But there is a person on Twitter claiming this, and their tweets don’t trigger my personal “this person is obviously a scammer” or “this person is obviously crazy” alarms (maybe I’m too naive).

Their claim is that there’s a stealth startup working on a new neural network architecture that is 100x-1000x faster than transformers on existing hardware (by speed I mean the number of tokens generated per second). They will give public access to it the same way OpenAI did with ChatGPT, and more details will be available in about two months. There are other claims as well, but let’s focus on these.

Sources:

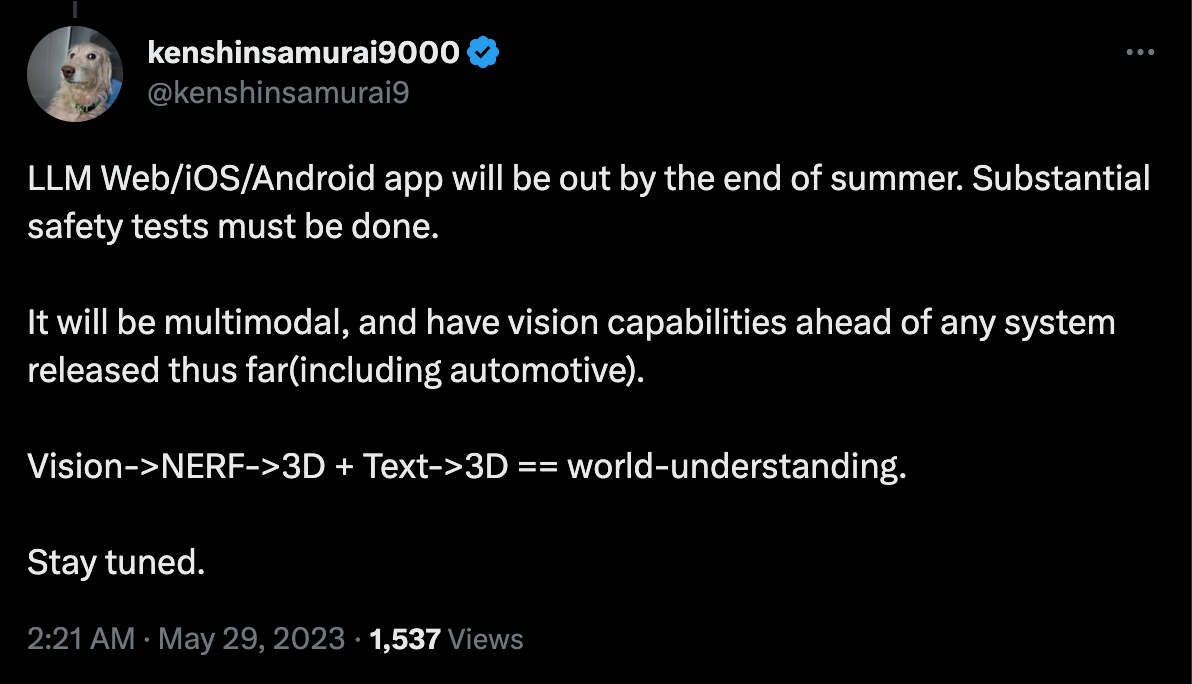

https://twitter.com/kenshinsamurai9/status/1628056046013915137

https://twitter.com/kenshinsamurai9/status/1628084013557592094

So this market will resolve YES if, before June 1st, 2023, this startup comes out of stealth mode with some kind of public announcement that 1) mentions at least a 100x improvement in speed compared to transformers and 2) positions the startup as a competitor to GPT-4 or any other state-of-the-art LLM at the time of the announcement. That claim should be at least somewhat reasonable based on the evidence provided (I'll be the judge of this).

I’d make the market more technical, but I don’t think I would be able to verify it, because I don’t expect us to get any kind of access to such a model besides a chat interface any time soon.

I'd bet YES just because the possibility is too exciting. But my confidence is low, of course.


@B I think he is referring to any of the popular benchmarks for LLMs. Perfect performance would be getting 100% on them.

@BairAiushin oh hmm I see. Maybe less of a red flag then, but (not knowing the benchmarks) that still sounds very ambitious!

@B it is very ambitious indeed. But AI will get there; the question is when. It seems quite unlikely that it will happen in 3 months.

I think it's plausible that an architecture 100X faster than transformers will be unveiled, but not from this stealth startup. It might be good to update the title to reflect this?

E.g. "Will the stealth startup referred to by this Twitter user make available a SOTA-competitive LM 100x faster than transformers before June 1st, 2023?"

@NoaNabeshima fair point. But I don't think the probability of someone else coming up with a superfast new architecture in 3 months is high enough to justify making the title this long :)

@BairAiushin I mean suppose that someone overtrains a pretty small mixture of experts model. That on its own might be sufficient.

@NoaNabeshima I doubt it. Anyway, what you're talking about will not resolve this market to YES, so your NO bet is safe :)

@NoaNabeshima if many people insist, I can change the title to match. I'm not sure people who have already made their bets will like it, though.

@BairAiushin But if both titles have the same resolution conditions, why would they not like it?