For a YES resolution, I don't require that it be competitive with the state of the art at the time. It must be comparable to ChatGPT (not strictly as good as, but not far inferior), and it must actually run on a single consumer GPU.

Added March 19: this market does not use the strict OSI definition of open-source (which would forbid terms like "don't be evil"). It is sufficient if it is legal for one to use the bot for most purposes, including commercial purposes.

Same idea as this market:



Summary @traders: resolving YES for OpenChat. If there are objections then @ me and I'll revisit.

The license is a serious issue. Some models are listed as "apache" on LMSYS, but then when you go look at the model card it turns out that the model itself has a non-commercial restriction. Starling-alpha is like this.

I'm considering the Qianwen license not to count as open source because of its restriction on training other LLMs. Ditto DBRX. Other licenses are more clear-cut.

Going down the list provided by @jacksonpolack, I believe the eligible models (open source, released before 2024) are:

- Mixtral-8x7b-instruct-0.1
- (ChatGPT seems to sit about here)
- Starling-LM-7b-alpha
- OpenChat-3.5
- OpenHermes-2.5-Mistral-7b

A couple more (mostly Mistral-related) follow.

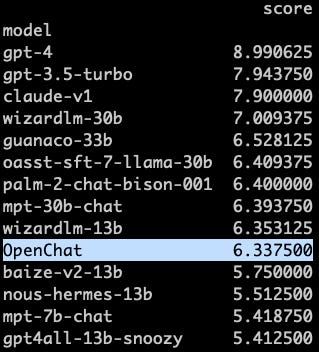

Mixtral can't be pleasantly run on a consumer GPU. Starling isn't actually open source (see the model card and the discussion in older comments). Next is OpenChat, which is unambiguously open source and seems to be comparable to ChatGPT, at least the ChatGPT that existed when I made this market.

I don't seem to have traded on this, but I'm pretty sure this is not the right resolution. Several open-source models on https://chat.lmsys.org/ rank above GPT-3.5 and were released in 2023.

A bit relevant: "Everyone keeps saying ‘here come the open source models’ yet no they have not yet actually matched GPT-3.5."

From https://thezvi.wordpress.com/2023/07/06/ai-19-hofstadter-sutskever-leike/

@ScottLawrence I don't know what benchmark that is, but open source at 7.00 vs. ChatGPT at 7.94 is not that big of a difference. Elo is similar too (1130 vs. 1065).
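To put a 65-point Elo gap in perspective, here is a minimal sketch using the standard Elo expected-score formula. It assumes the quoted ratings are on the conventional 400-point logistic Elo scale; the function name `elo_expected_score` is illustrative, not taken from any leaderboard's code.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score of A against B under the standard logistic Elo model
    (400-point scale). Ignoring ties, this is A's win probability."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


# The two ratings quoted in the comment above; the gap is 65 points.
higher, lower = 1130, 1065
p = elo_expected_score(higher, lower)
print(f"higher-rated model expected score: {p:.3f}")
```

Under these assumptions the higher-rated model is expected to win only about 59% of head-to-head comparisons, which supports the "not that big of a difference" reading.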

Guanaco-33B is already performing close to the point where it's reasonable to say it's not far inferior. Although I don't think this should resolve YES just yet, I think something better than Guanaco will be released.

@ShadowyZephyr To be clear, that comment from Zvi wouldn't decide resolution even if we were at the end of the year. I just provided it for context.

@ScottLawrence Got it. I'm just saying "not far inferior" is a low bar. The benchmark difference is not that large. I feel like this is pretty close to counting for YES, so the next iteration probably will. Also, Guanaco-33B matches ChatGPT on the Vicuna benchmark.

@ShadowyZephyr I don't know how to interpret those benchmark numbers. This market will resolve subjectively, taking into account things like response time and whatever other issues I discover when testing it myself. I don't expect those to be the issue, but in principle if each response takes more than maybe 10 or 20 seconds to generate, I don't care what the benchmark says, the model won't contribute to YES. Same for any other severe usability issues (whatever they may be).

Also note that, AFAICT, Guanaco is not open source (commercial use is forbidden due to its LLaMA genealogy). See the discussion below and the 19 Mar clarification to the resolution criteria. It looks like the same applies to Vicuna and WizardLM.

@ScottLawrence That's not what you said at all. 10 to 20 seconds is reasonable for a model running on a consumer GPU generating a long response. Even ChatGPT takes >10 seconds for really long responses.

As for the thing about what open source means, yes Guanaco technically can't be used commercially, but what can anyone do about it if someone did it behind closed doors? I'm sure people are doing it already.

@AI The 70B version is competitive with ChatGPT on all benchmarks, and it's commercially available.

Can't run on a consumer GPU, but we're so so close. We just need that performance in a 30B model.

@ShadowyZephyr I'm not understanding the claim that this is "open source". Free != open source.

For a quantitative requirement on time, let's say that it has to be within a factor of 3 of ChatGPT. It's okay if this requires an expensive graphics card (maybe a max price of 2k?).
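The two quantitative conditions above (speed within a factor of 3 of ChatGPT, single GPU under a price cap) can be sketched as a simple check. This is a hypothetical encoding, not the market's official procedure: it assumes latency is wall-clock seconds per response on the same prompt, and that "2k" means US$2,000. The names `MAX_SLOWDOWN`, `MAX_GPU_PRICE_USD`, and `meets_speed_and_hardware_bar` are illustrative.

```python
# Hypothetical encoding of the quantitative criterion stated above.
# Assumptions: latency = wall-clock seconds per response on the same prompt;
# the price cap is in US dollars.
MAX_SLOWDOWN = 3.0        # "within a factor of 3 of ChatGPT"
MAX_GPU_PRICE_USD = 2000  # "maybe a max price of 2k?"


def meets_speed_and_hardware_bar(local_seconds: float,
                                 chatgpt_seconds: float,
                                 gpu_price_usd: float) -> bool:
    """Return True if the local model is at most MAX_SLOWDOWN times slower
    than ChatGPT and runs on a single GPU costing at most MAX_GPU_PRICE_USD."""
    if chatgpt_seconds <= 0:
        raise ValueError("chatgpt_seconds must be positive")
    slowdown = local_seconds / chatgpt_seconds
    return slowdown <= MAX_SLOWDOWN and gpu_price_usd <= MAX_GPU_PRICE_USD


print(meets_speed_and_hardware_bar(12.0, 5.0, 1600))  # 2.4x slower
print(meets_speed_and_hardware_bar(20.0, 5.0, 1600))  # 4x slower
```

The check only covers speed and hardware cost; the comparison of answer quality to ChatGPT remains a subjective judgment.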

Orca-13B, if it's open-sourced

StableLM

https://github.com/stability-AI/stableLM/

It satisfies the qualitative comparison to ChatGPT, is open source, and has its dataset details released as well.

As for the limited-GPU requirement, the notebook published alongside it accomplishes that.

https://github.com/Stability-AI/StableLM/blob/main/notebooks/stablelm-alpha.ipynb

@firstuserhere Well I certainly won't be betting NO in this market :)

To be crystal clear about resolution criteria, because of the open-source requirement, what matters for the comparison to ChatGPT is StableLM-Base-Alpha, rather than StableLM-Tuned-Alpha. The base model appears to not be a chatbot, and is therefore not yet sufficient for a YES resolution.

https://www.cerebras.net/blog/cerebras-gpt-a-family-of-open-compute-efficient-large-language-models/

I think this counts as "open source". It isn't a chatbot yet, but the fact that it exists means that derivatives can also be open source, so I expect to see an open-source chatbot in the next month. Then I'll try to figure out whether it's comparable to ChatGPT (the original, not any deluxe GPT-4 version).

@Gigacasting If companies are using a technically "non-commercial license" chatbot for business purposes, doing so in a publicly visible manner, and doing so without getting sued, then that's good evidence that it's de facto legal.