Was the reason Ilya Sutskever supported the ouster of Sam Altman in November 2023 due to an AI capabilities advance that he either witnessed or anticipated arriving soon?

I will resolve to my best guess in 2025 (preponderance of evidence standard, incorporating non-public info I hear from OpenAI employees), or to N/A if I have no idea or if the framing of this question is wrong.

Evidence against Ilya having been spooked by a capabilities advance:

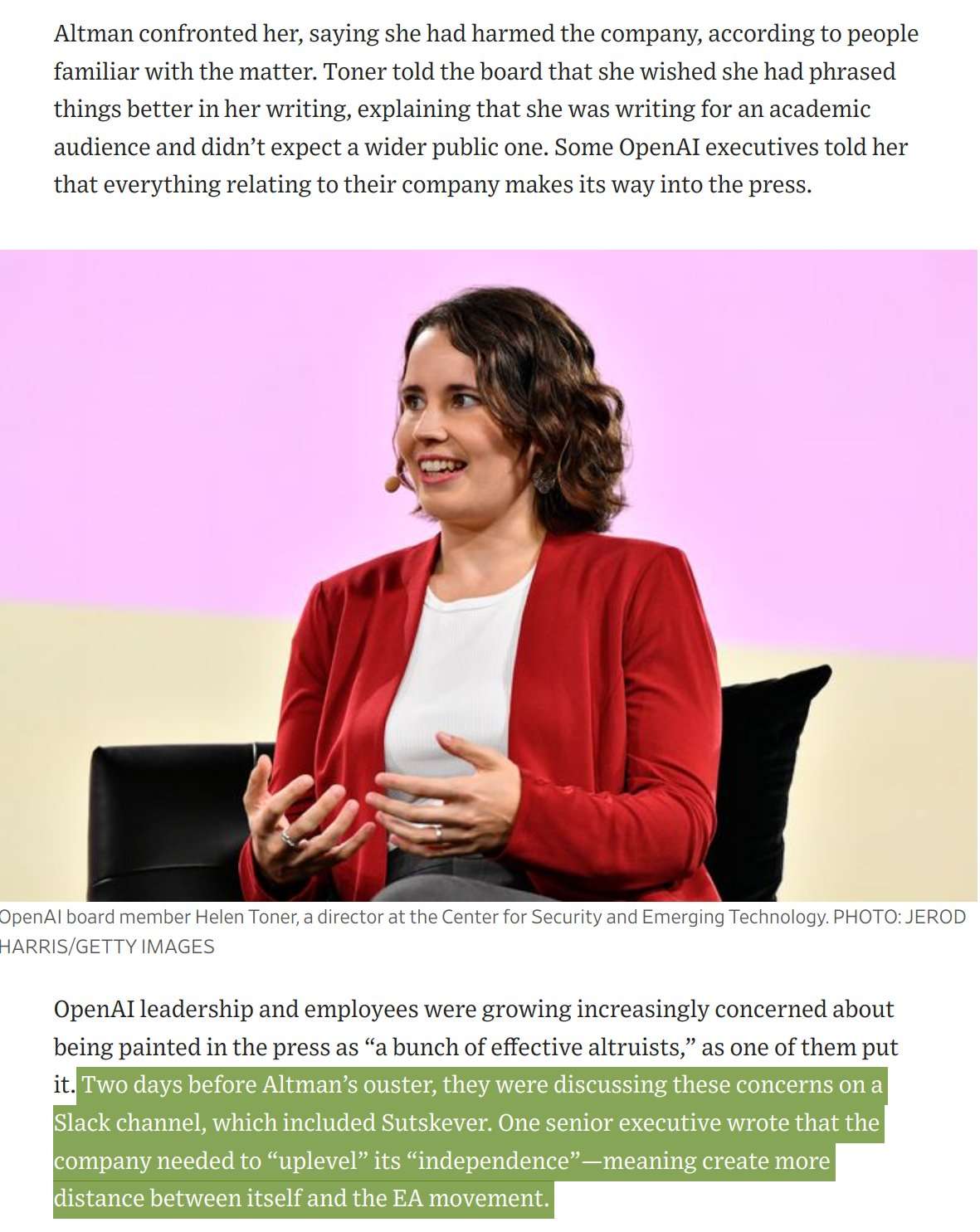

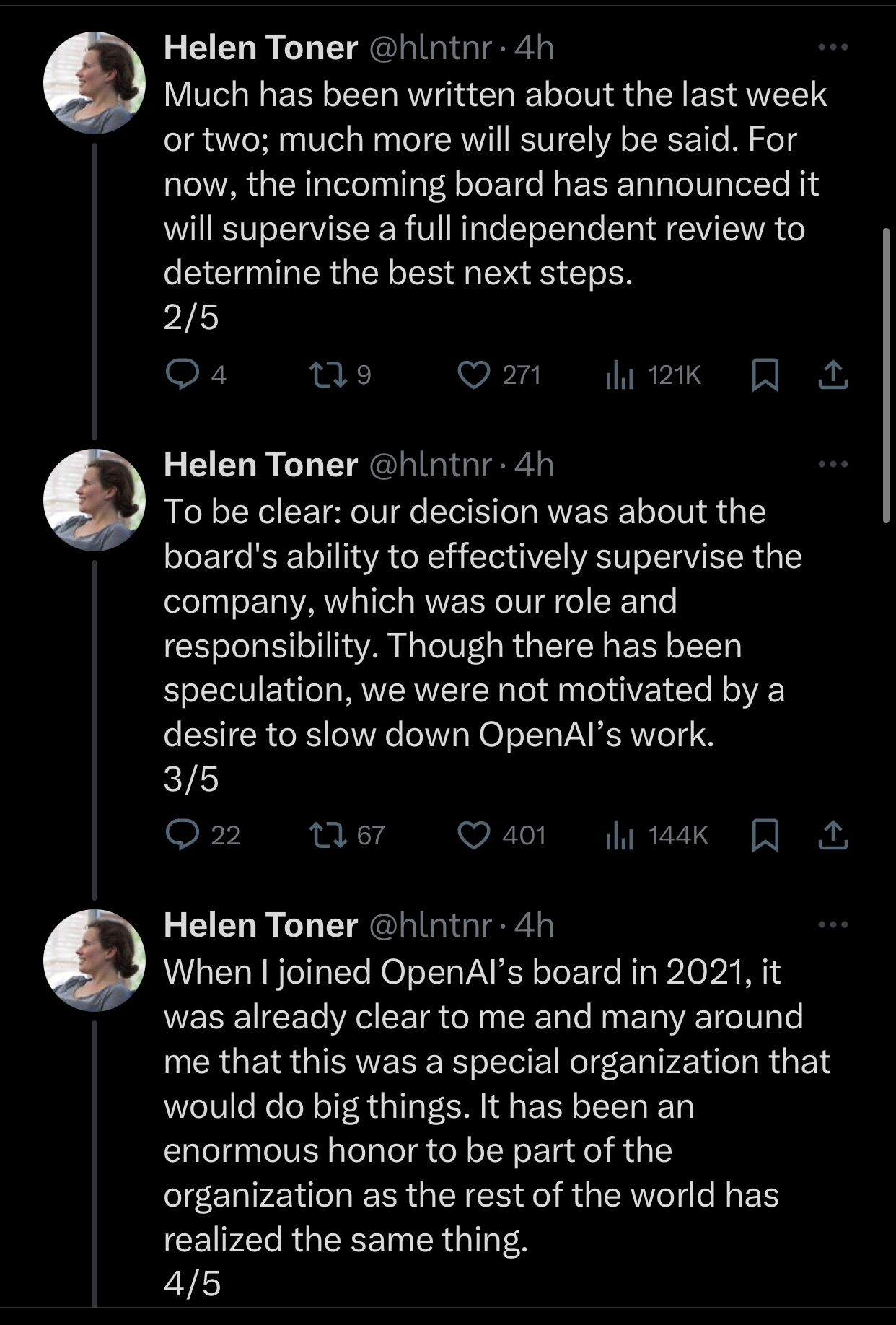

In Helen Toner's board resignation tweet she says "To be clear: our decision was about the board's ability to effectively supervise the company, which was our role and responsibility. Though there has been speculation, we were not motivated by a desire to slow down OpenAI's work."

Here's a link to the tweet https://x.com/hlntnr/status/1730034017435586920?s=20

An OpenAI spokesperson didn’t comment on Q* but told me that the researchers’ concerns did not precipitate the board’s actions. Two people familiar with the project, who asked to remain anonymous for fear of repercussions, confirmed to me that OpenAI has indeed been working on the algorithm and has applied it to math problems. But contrary to the worries of some of their colleagues, they expressed skepticism that this could have been considered a breakthrough awesome enough to provoke existential dread. Their doubt highlights one thing that has long been true in AI research: AI advances tend to be highly subjective the moment they happen. It takes a long time for consensus to form about whether a particular algorithm or piece of research was in fact a breakthrough, as more researchers build upon and bear out how replicable, effective, and broadly applicable the idea is.

Given how strongly talk of Q* is being favored over better-evidenced possibilities, I am still more convinced that Q* is entirely fabricated. A perfect distraction! Insiders, though smart enough to realize it's probably fake, take it as a reason to further their pet theories, and MSM loves the story of a major capabilities advance leading to such corporate drama.

@SemioticRivalry Please explain how a coup could occur with a 3-3 board where Ilya is one of the 3 originally on Altman/Brockman's side. Also, why would the board remain silent if the reason was simply an attempt to maintain the board's independence, which is highly legible and understandable given their charter? Happy to be wrong here.

My current best guess would be that the board didn't want to say why they got rid of Sam because it would ignite a giant public chain of "No mom, they started it!"

The board made a move against Sam because Sam made a move against the board because the board made a move against Sam because-...

@KevinCornea See this comment and the parent thread: https://www.lesswrong.com/posts/KXHMCH7wCxrvKsJyn/openai-facts-from-a-weekend?commentId=E7rEuPdmsSKSYWnPz

@adk Interesting, but some problems as noted by others:

seems to take for granted that those particular leaks are accurate but not others relating to Q*. why? it does not seem obvious to me why you would automatically place significant weight on any particular one, or on any of them at all

there is an assumption of board members not being able to vote for their own ouster, which as Zvi notes seems kind of crazy and probably more importantly would have made a deadlocked board unsustainable (they've had these same 6 board members for months)

the explanation for complete silence by the board is essentially legal reasons - this is an American company, and board members are protected by the First Amendment. any accusations of slander/defamation would have an extremely high bar to pass. the complete silence on reasoning by the board is super weird, and is not easily explained by "legal reasons"

@Joshua I was interpreting it as needing to be decisive on the margin, so if he had not been spooked then he would not have supported firing Sam as CEO.

@MartinRandall I would still bet no on that criterion, but yeah, that is quite different. I think the main reason he flipped was general corporate politics and Sam having tried to oust Toner. I think he'd still have flipped without Q*. But I'm especially certain he didn't flip primarily bc of Q*.

If Ilya had serious safety concerns, I don't think he'd have flipped /back/ to Sam's side. He'd have held his ground.

@Joshua but why act so suddenly? it's clear they had no ready-to-go interim CEO - they picked Mira who immediately flipped to Sam's side. why not wait if it was just over politics/Sam trying to oust Toner, which has no immediate deadline in a deadlocked 3-3 board?

@KevinCornea We've got no information about when the letter was supposedly sent to the board, even from the anonymous sources. It could have been sent months ago.

@Joshua Ok, but I meant in general with using your logic, if the reason for the ouster was "general corporate politics and Sam having tried to oust Toner", that gives no reason for why the board would have acted so suddenly and rashly. Not having a hand-picked interim CEO waiting in the wings indicates either gross incompetence/negligence or a legitimate reason to act suddenly. A theory that claims that Ilya (perhaps the most prolific and well-respected ML researcher of this generation) is incompetent seems like a questionable theory.

@Joshua "no comment on accuracy" from the official source, and "no letter...no breakthrough" from the anonymous one...

hmm

@KevinCornea Also worth noting that the report in The Information said that Ilya was the one who developed Q*. It makes no sense that the board would be informed of it through a letter, or that Ilya would get spooked by his own project.

Meanwhile, Reuters is retracting the claim that the letter contributed to Sam's firing.

@Joshua Source for the Reuters retraction? I still see it on their front page.

According to Nathan Labenz, the non-OAI employees of the board were not familiar with GPT4 for 2+ months post its development. It seems plausible that Ilya knew but Adam/Helen/Tasha did not. Also, Ilya could have gotten spooked by the recent results of the training run, not the existence of the project itself.

I think there is a lot that is still murky here. But the thing that made this interesting was the specific claim that Mira said the letter was a reason for firing Sam. Retracting that makes the whole story much, much less interesting, and not very important to this market.

@Joshua I'm suspicious of The Independent as an objective source here, and of Reuters' ability to tell fact from fiction in this domain.

@Joshua Sam previously said that AGI would need to use more than just LLMs. A model that can do math because it uses external modules that do math would not be groundbreaking in itself; it would just be like how they're merging the LLM with plugins, for example. They've already done this, and a math module wouldn't be big news, and neither would an LLM that can now do basic math; it doesn't unlock anything major. AGI is more like a platform that unifies many modules at once, with real fluid ability to mix them reliably at low cost. Even if they achieved this internally now, it's a long time away from release by their standards; they will need to work at it for a year or two.