⭐ CLICK HERE TO FILL OUT THE SURVEY

I'm running the survey above. To qualify, you need to be a Manifold user whose account was registered before Feb 22nd, 2025 and has made at least 10 bets.

In the survey, respondents are asked: "Imagine you are trying to predict a complex global event happening 5 years from now. Imagine you have 1 hour to come up with a prediction and you cannot use the internet.

For each technique, please indicate how useful you would find it in this exercise."

Then, they are asked to rate each technique from 1 (not useful at all) to 5 (extremely useful). The techniques are presented in random order (except for the final questions 12-15). I will look at the users' Brier scores using the calibration app (thanks, sufftea!). Note that the Brier score doesn't measure calibration but rather the distance of negative bets from 0 and of positive bets from 1; therefore, lower is better.
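For readers unfamiliar with the metric, here is a minimal sketch of a Brier score in Python. It assumes each bet is summarized by one probability and one binary outcome; how the calibration app actually derives those probabilities is not specified here, and the function name is hypothetical.

```python
def brier_score(probabilities, outcomes):
    """Mean squared distance between probabilities and binary outcomes.

    outcomes use 1 for a YES resolution and 0 for a NO resolution.
    Lower is better; 0 is a perfect score.
    """
    if len(probabilities) != len(outcomes) or not probabilities:
        raise ValueError("need two non-empty sequences of equal length")
    squared_errors = [(p - o) ** 2 for p, o in zip(probabilities, outcomes)]
    return sum(squared_errors) / len(squared_errors)


# Made-up data: three bets at 10%, 90% and 50%, resolving NO, YES, YES.
example = brier_score([0.1, 0.9, 0.5], [0, 1, 1])
print(round(example, 4))
```

In this made-up example the score is (0.01 + 0.01 + 0.25) / 3 = 0.09.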

Every technique whose usefulness rating correlates negatively with the users' Brier scores, with a Pearson coefficient of at least 0.2 in magnitude, will resolve YES. Weaker correlations will resolve NO. For illustration, 0.2 is roughly the correlation between male gender and enjoyment of danger.
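As a rough illustration of the resolution rule, the sketch below computes Pearson's r between usefulness ratings and Brier scores and checks it against the threshold. It assumes "negatively correlated with a coefficient of at least 0.2" means r ≤ −0.2; the function names and the data are hypothetical, not the actual analysis.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def resolves_yes(usefulness, brier_scores, threshold=0.2):
    """Assumed reading of the rule: YES if r is -threshold or lower."""
    return pearson(usefulness, brier_scores) <= -threshold


# Made-up data: higher usefulness ratings go with lower (better) Brier scores.
ratings = [1, 2, 3, 4, 5]
briers = [0.30, 0.25, 0.20, 0.15, 0.10]
print(resolves_yes(ratings, briers))
```

With only a few dozen respondents, an r near the threshold will be noisy, so a single coefficient should be read with caution.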

The survey will stop gathering responses by the resolution date (March 8).

If there are more than 100 respondents, I might randomly select 100 of them to form the sample.

I will not bet on this market. I reserve the right to remove suspicious responses.

🏅 Top traders

| # | Trader | Total profit |
|---|---|---|
| 1 | | Ṁ822 |
| 2 | | Ṁ103 |
| 3 | | Ṁ98 |
| 4 | | Ṁ81 |
| 5 | | Ṁ80 |

@copiumarc Yeah the market was way overpriced, I bet no on most options semi-blindly and got +100% profit.

@ProjectVictory I should've allocated more mana to this market but bet it all on a market where I'm probably gonna be wrong sob

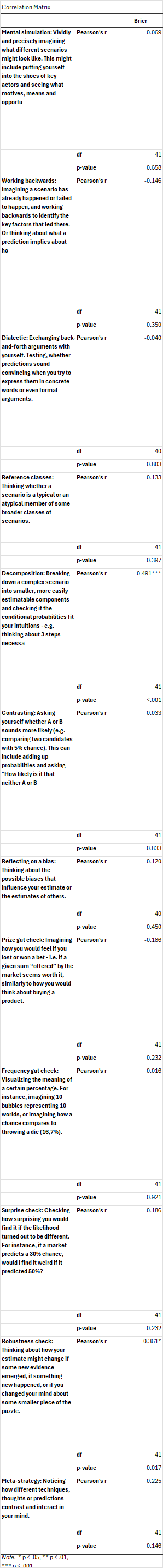

Thank you to all the traders and 43 respondents! Here are the correlations (as mentioned, low Brier score = good forecasting). Thanks to some great points by @Ziddletwix, I'll analyse the data further in an upcoming post; follow my EAF for updates.

@SG - Manifold REALLY needs to respect user preference when it comes to launching an external browser from the Android app. I just filled the survey out, switched away to copy my username, tried to switch back and discovered the window had been destroyed.

I'll share my answer to "strategies you missed" publicly; these are strategies I use a lot:

Checking historical data: "how often did X happen in the past?"

Accounting for Manifold bias: how likely are the other traders to be biased towards certain answers? For example, Manifold is left-leaning politically and optimistic about AI progress.

Betting against good/interesting outcomes: if you lose, you at least get a notification of a cool thing happening. Also, unfortunately, surprisingly profitable, probably due to Manifold bias.

@ProjectVictory I'd categorize those as a) reference classes b,c) reflecting on bias - but thank you

@hominidan Yes, this kinda fits the categories, but while the last one is kinda reflecting on bias, it's intentionally being biased, not trying to avoid it.

this is a very cool idea but I feel obligated to say that brier score is basically totally irrelevant to being good at prediction markets!

Betting perfectly on the right side of every single 50% market (amazing!) tanks your brier score, while betting based on a coinflip for each 95% market improves it. The quick TL;DR is that most market prices are ~roughly calibrated(-ish), in which case brier score is just a function of “did you bet on a market that’s far from 50%”.

(That approx only breaks down when you are the one moving the market price a bunch—since you're pushing it to a new probability, now you're doing something that looks like classical forecasting. But the vast majority of trades are at/near market price. To put it most simply—brier score doesn't care whether you were on the right or wrong side of the market price. But that's also the question that matters most!)
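A small sketch of the arithmetic behind this point, assuming the market price is perfectly calibrated and that the Brier score is computed on the price at which the bet is placed (the function name is hypothetical). Under those assumptions the expected Brier contribution of a bet at price p is p(1 − p)² + (1 − p)p² = p(1 − p), and the side of the bet does not appear anywhere in the formula.

```python
def expected_brier_at_price(price):
    """Expected Brier contribution of a bet placed at a calibrated price.

    The market resolves YES with probability `price` (error (1 - price)^2)
    and NO with probability 1 - price (error price^2). The bettor's side
    is not an input: it does not affect the result.
    """
    return price * (1 - price) ** 2 + (1 - price) * price ** 2


for price in (0.50, 0.80, 0.95):
    print(price, round(expected_brier_at_price(price), 4))
```

So every bet at 50% contributes 0.25 whichever side was taken, while a bet at 95% contributes 0.0475 in expectation even if the side was picked by a coin flip.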

(perhaps i can say this without shame as AFAIK my brier score/calibration app page is rather good at least compared to other accounts i've checked. but i cannot overstate how little that tells you about anything)

The ~only meaningful metric for prediction market success is profit. People find that frustrating because it’s true that profit is (1) super noisy, & (2) doesn’t always track “successful forecasting” all that well ("good trader" is correlated with but distinct from "good forecasting"). That’s true, but unavoidable. (No shade to the calibration app, which is very neat, but “brier score for prediction markets” is something of a misunderstanding that keeps popping up. including on the about pages for the site itself!)

@Ziddletwix (so I do hope you also check the correlations with some measure of profit, which I know annoys people for the stated reasons but it does at least partially track good forecasting whereas manifold brier score has no relationship. and it goes without saying that brier score is just fine when you are actually forecasting—but prediction market trading != forecasting)

@Ziddletwix Many thanks, I thought this is exactly what Brier accounts for but playing with some models, I see you're right. I feel like it should be easy to adjust for the effect you mention by calculating a function that would change it so that coin-flipping is punished at all levels equally. I can't code, unfortunately, but in case anyone would like a guaranteed spot in Valhalla, the code for the calibration app is here :) https://github.com/sufftea/manifold_calibration

@Ziddletwix I considered using (profit / number of bets) but I didn't find a simple way to count bets. I also assumed profit includes mana you bought for dollars but I'm not sure now, when I come to think of it.

@Ziddletwix The only thing I'd dispute is the claim that brier score has no relationship to good forecasting. Although the effect of selecting bets that are far from 50% seems important - if you assume that good and bad forecasters choose to bet on markets that are far from 50% at the same rate, then 100% of the variance in Brier score should be forecasting ability, right (plus random variance, which we can ignore, as it biases the correlation in both directions equally)?

> Although the effect of selecting bets that are far from 50% seems important - if you assume that good and bad forecasters choose to bet on markets that are far from 50% at the same rate, then 100% of the variance in Brier score should be forecasting ability, right

So I don’t think this is quite right—what you’re describing is whether good bettors are more likely to participate in markets where the market price is accurate. That is not a safe assumption—in fact, the goal of a good bettor is to identify bad market prices and to take the action on the right side =).

Super high level, Brier score is largely irrelevant for prediction markets because it evaluates a probability. When you are betting, you (typically) don’t set the probability, you “take” the market price as it is. The only question that matters is whether you take the right side of the market price—that’s what “predictive skill” means in the context of prediction markets. (TL;DR: forecasting -> I pick a probability, betting -> I take a probability and have to pick the "right side" that is +EV).

Brier score sees a bet occur at 10%, and compares it to the outcome. If it resolves YES, that’s a .9^2 (very bad!), if it resolves NO, it’s .1^2 (very good!). But it does not care whether you bet YES or NO. This is a natural way to grade the forecast of 10%. It’s not relevant to “was YES a good bet”.

How would you evaluate if it was a good bet? Well, if you bet YES, you shouldn't penalize the bet for resolving YES (despite that 0.9^2 distance in Brier score). You should reward it by how much those shares increased in value—that's the definition of profit.
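To make the 10% example concrete, here is a hedged sketch comparing the Brier term with per-share profit. It assumes a simplified fixed-price model (a YES share costs the price, a NO share costs one minus the price, a winning share pays 1) and ignores fees and price impact; the function names are hypothetical.

```python
def brier_term(price, resolved_yes):
    """Squared distance between the bet price and the outcome (side ignored)."""
    outcome = 1.0 if resolved_yes else 0.0
    return (price - outcome) ** 2


def profit_per_share(price, side, resolved_yes):
    """Profit on one share under the simplified fixed-price model."""
    if side not in ("YES", "NO"):
        raise ValueError("side must be 'YES' or 'NO'")
    cost = price if side == "YES" else 1 - price
    won = resolved_yes if side == "YES" else not resolved_yes
    payout = 1.0 if won else 0.0
    return payout - cost


# A bet placed at 10% on a market that resolves YES.
for side in ("YES", "NO"):
    print(side, round(brier_term(0.1, True), 2),
          round(profit_per_share(0.1, side, True), 2))
```

Both bettors get the same Brier term of about 0.81, yet the YES bettor gains about 0.9 per share and the NO bettor loses about 0.9 per share.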

(The exception is the extent to which the bettor does set the market price, rather than take it. In that case, a Brier score would be relevant. In practice, for sufficiently liquid prediction markets, that should be a very small effect, no single bettor should have much influence on the market price.)

> I considered using (profit / number of bets) but I didn't find a simple way to count bets. I also assumed profit includes mana you bought for dollars but I'm not sure now, when I come to think of it.

Net worth includes the mana you buy, but profit doesn't. You should be able to get "number of bets" from that same calibration site. But again, FWIW, "raw profit is all that matters" is a frustrating but inevitable rule. You can divide by bets, but you are then penalizing people who consistently bet with small, positive +EV margins (which is in fact the most skillful form of betting!). (If you want to control for activity, I think it's safer to control for "how long you've been on the site", but FWIW any attempt to adjust "raw profit" will introduce bad/weird incentives.)

@Ziddletwix If I managed to get a variable called Average distance of probability before a bet from 50%, I could just use it as a moderator variable in the regression I'll run. I'd still need to figure out how to run the code but that might work, right?
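A minimal sketch of how such a variable could be computed per user, assuming a list of market probabilities recorded just before each of that user's bets is available. The function name and the data are hypothetical; this is not the calibration app's code.

```python
def mean_distance_from_half(probabilities_before_bet):
    """Average absolute distance of the pre-bet probability from 50%."""
    if not probabilities_before_bet:
        raise ValueError("need at least one bet")
    distances = [abs(p - 0.5) for p in probabilities_before_bet]
    return sum(distances) / len(distances)


# Made-up user who bet on markets priced at 50%, 90% and 10%.
print(round(mean_distance_from_half([0.5, 0.9, 0.1]), 4))
```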

@Ziddletwix (for any random readers of this thread, these ideas don't change anything about the resolution criteria, I'm talking purely about the subsequent use of this data for research purposes)

@Ziddletwix Ha, I managed to program that with Copilot without understanding much of the code but it seems to work, so I'll incorporate it into the post! Thanks for the feedback

> If I managed to get a variable called Average distance of probability before a bet from 50%, I could just use it as a moderator variable in the regression I'll run. I'd still need to figure out how to run the code but that might work, right?

So two things. (1) however you adjust the market price the bet is placed at, it will not address this more basic challenge:

> (TL;DR: forecasting -> I pick a probability, betting -> I take a probability and have to pick the "right side" that is +EV).

Any Brier score evaluates a probability, and a probability is primarily a function of the market itself, not the bettor.

(2) So Brier score has an intuitive decomposition (well, several, but picking one). It combines (a) reliability, (b) resolution, and (c) uncertainty (in the standard form: reliability minus resolution plus uncertainty). Unhelpful names, but (a) is just the calibration itself, (b) is "how far are the probabilities from 50%" (it's easy to be calibrated with everything being a coinflip, this rewards you for being confident as well), & (c) is just the irreducible uncertainty from noise. TL;DR it rewards you for being confident (far from 50%) and well calibrated, and balances the two.

IIUC, a new variable for the "average distance before a bet from 50%", essentially "controls for" the resolution. The issue is that the resolution (i.e. distance from 50%) is a fundamental part of predictive skill (it's why Brier score isn't just calibration), assuming that the trader picks the probability. Without it, Brier score just becomes functionally calibration (which is fine, but doesn't meaningfully compare between different people). And none of this can address the fact that traders don't typically express their beliefs via probability, they react to the market price and pick a side. Maybe the idea is to use the price "before" to try and address some of this, but in practice many people place their bets by setting limit orders near the market price (that's how you get the best deal on the trade!) and thus this just measures "the brier score of markets they participate in" and is irrelevant to "how good they are at trading".
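For reference, the decomposition mentioned in point (2) can be sketched as below. This is the standard Murphy form, Brier = reliability − resolution + uncertainty, where resolution measures how far the observed YES frequency in each forecast group is from the overall base rate. The sketch groups bets by identical probability, which makes the identity exact; the function name and the data are hypothetical.

```python
from collections import defaultdict


def murphy_decomposition(probabilities, outcomes):
    """Return (reliability, resolution, uncertainty) for binary outcomes.

    Bets are grouped by identical probability so that
    Brier = reliability - resolution + uncertainty holds exactly.
    """
    n = len(probabilities)
    base_rate = sum(outcomes) / n
    groups = defaultdict(list)
    for p, o in zip(probabilities, outcomes):
        groups[p].append(o)

    reliability = 0.0
    resolution = 0.0
    for p, group in groups.items():
        frequency = sum(group) / len(group)  # observed YES rate in this group
        reliability += len(group) * (p - frequency) ** 2
        resolution += len(group) * (frequency - base_rate) ** 2
    uncertainty = base_rate * (1 - base_rate)
    return reliability / n, resolution / n, uncertainty


# Made-up bets: two at 10% (both NO), two at 90% (both YES), two at 50% (one each).
probs = [0.1, 0.1, 0.9, 0.9, 0.5, 0.5]
results = [0, 0, 1, 1, 1, 0]
rel, res, unc = murphy_decomposition(probs, results)
print(round(rel - res + unc, 4))
```

For this made-up data the three terms recombine to 0.09, which matches the Brier score computed directly as (4 × 0.01 + 2 × 0.25) / 6.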

No evaluation of prediction market trading can be meaningful unless you care about which side of the bet they took (and did the market resolve in that direction).

@Ziddletwix I'm not sure I get you. I think what I would be doing would be equivalent to working with the Brier Skill Score. I.e. Manifold as a whole is very calibrated, so we should assume that for every bet, there's a ~50% probability that the true probability is higher and a ~50% probability that it's lower. Therefore, whatever the "probability before a bet" is should work as our default 50%: if you're betting randomly, you'll get the Brier score of the market, allegedly 0.107. If you make very bold predictions, they will be those that are far from the "consensus" (the probability before a bet), not far from 50%, and this is entirely dependent on picking the correct side. Is there something I'm missing?
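For context, the Brier Skill Score compares a score against a reference score: BSS = 1 − BS / BS_ref. The sketch below uses the alleged market-wide figure of 0.107 from the comment above as the reference; the function name and the user score are made up, and how a per-user score would be constructed from bets is left open.

```python
def brier_skill_score(brier, reference_brier):
    """1 - BS / BS_ref: 0 means no better than the reference, 1 is perfect."""
    if reference_brier <= 0:
        raise ValueError("reference Brier score must be positive")
    return 1 - brier / reference_brier


MARKET_REFERENCE = 0.107  # figure quoted in the thread, not verified here

print(brier_skill_score(0.107, MARKET_REFERENCE))
print(round(brier_skill_score(0.0535, MARKET_REFERENCE), 4))
```

A score equal to the reference gives a skill score of 0, half the reference gives 0.5, and anything worse than the reference goes negative.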

@Ziddletwix In case you'd feel like it might be easier to discuss the problems with my suggested approach over a videocall, I'd be very grateful for it, feel free to DM me

Our survey is currently stagnating at 23 responses, what a leverage for any brave respondent!

https://forms.office.com/r/nvUjHHCrxH